
Learning Objectives

- ✓ Define the Secure Software Development Lifecycle and articulate why security must be embedded at every phase

- ✓ Map security activities to each SDLC phase from requirements through retirement

- ✓ Explain CIS Safeguard 16.1 requirements and the six mandatory documented areas

- ✓ Compare major SSDLC frameworks (NIST SSDF, OWASP SAMM, Microsoft SDL, BSIMM, CISA Secure by Design, SAFECode)

- ✓ Identify the 10 required governance documents for an SSDLC program

- ✓ Design an AI acceptable use policy with three-tier tool classification

- ✓ Establish an annual review and continuous improvement cycle

1. What Is SSDLC and Why It Matters

Figure: Foundations & Governance Overview — Track 1 coverage of SSDLC process, policy, and governance fundamentals


The Secure Software Development Lifecycle (SSDLC) is the practice of integrating security considerations, activities, and controls into every phase of software development — from initial requirements gathering through design, implementation, testing, release, operational response, and eventual retirement. It is not a separate process bolted on after development; it is the development process itself, executed with security as a first-class concern.

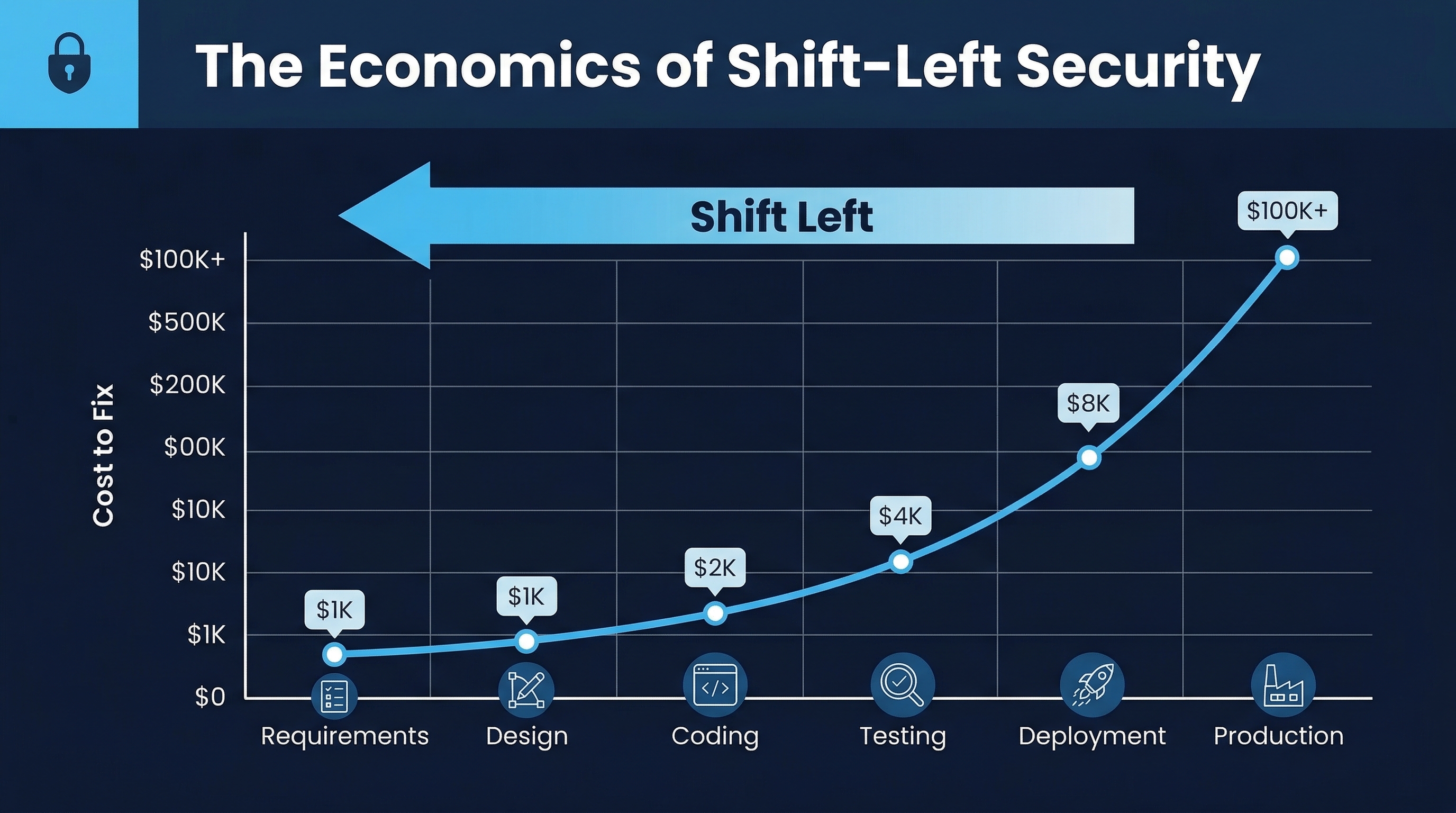

The Economics of Defect Remediation

The single most compelling argument for SSDLC adoption is economic. Figures widely attributed to the IBM Systems Sciences Institute, the National Institute of Standards and Technology (NIST), and multiple industry studies describe a steeply rising cost curve for fixing security defects the later they are discovered. The multipliers below are order-of-magnitude estimates rather than precise measurements:

| Phase Discovered | Relative Cost | Example (at $1,000 base) |
|---|---|---|
| Requirements / Design | 1x | $1,000 |
| Implementation (coding) | 5–6x | $5,000–$6,000 |
| Integration Testing | 10x | $10,000 |
| System / E2E Testing | 15–40x | $15,000–$40,000 |
| Production / Post-Release | 30–100x | $30,000–$100,000 |
| Post-Breach (incident + regulatory) | 640x+ | $640,000+ |

A SQL injection vulnerability caught during design review costs a conversation. The same vulnerability discovered after a breach costs incident response, forensics, legal counsel, regulatory notification, potential fines, customer notification, credit monitoring, brand damage, and lost revenue. IBM’s 2024 Cost of a Data Breach Report, based on research conducted by the Ponemon Institute, puts the average breach cost at $4.88 million, and breaches caused by application vulnerabilities consistently rank among the most expensive categories.
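The arithmetic behind the table can be sketched in a few lines of Python. This is only an illustration: the phase keys and the function name `remediation_cost_range` are hypothetical, and the multipliers are copied from the table above rather than derived from any dataset.

```python
# Relative cost multipliers (low, high) per phase, copied from the table above.
# A high value of None marks the open-ended "640x+" row.
COST_MULTIPLIERS = {
    "requirements_design": (1, 1),
    "implementation": (5, 6),
    "integration_testing": (10, 10),
    "system_testing": (15, 40),
    "production": (30, 100),
    "post_breach": (640, None),
}


def remediation_cost_range(phase: str, base_cost: float = 1_000.0):
    """Return the (low, high) estimated cost of fixing a defect found in `phase`.

    `base_cost` is the cost of fixing the same defect at requirements/design time.
    The high bound is None when the table gives no upper limit.
    """
    low, high = COST_MULTIPLIERS[phase]
    return base_cost * low, (base_cost * high if high is not None else None)
```

For example, `remediation_cost_range("system_testing")` gives the $15,000 to $40,000 range shown in the table for a defect with a $1,000 design-time cost.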

The Shift-Left Imperative

“Shift left” is the principle of moving security activities as early as possible in the development lifecycle. When security requirements are defined alongside functional requirements, when threat models are built alongside architecture diagrams, when secure coding standards are enforced during implementation rather than discovered during penetration testing — the result is software that is secure by construction rather than secure by accident.

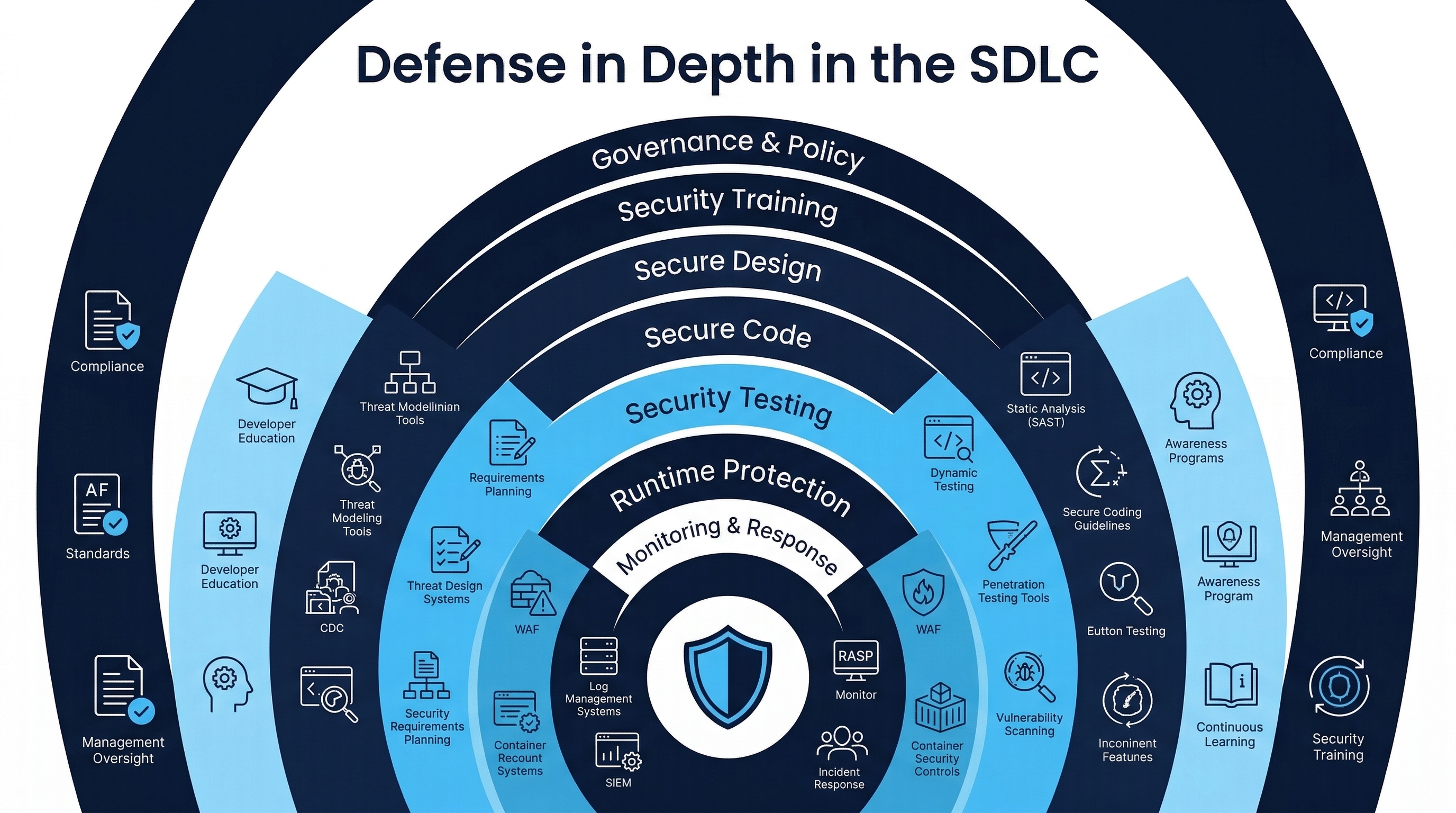

Shift-left does not mean shift-only-left. A mature SSDLC operates security activities at every phase, creating defense in depth within the development process itself. Early phases prevent classes of vulnerabilities. Middle phases catch what prevention missed. Late phases validate the entire system. Post-release phases handle what escaped everything.

Figure: Shift-Left Security Economics — Cost multiplier of defect remediation across SDLC phases


Figure: Defense in Depth in the SDLC — Layered security activities across all development phases


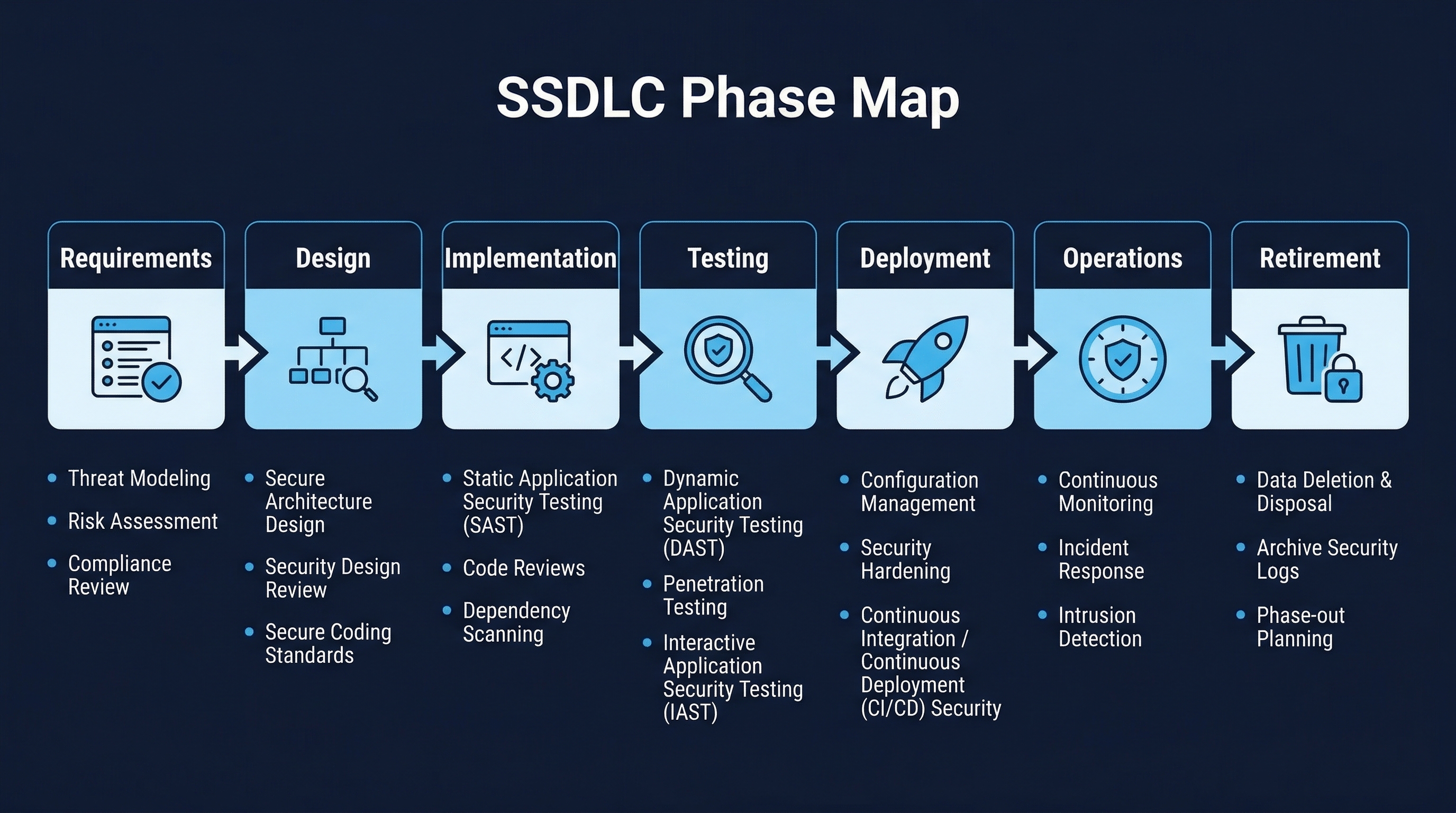

2. SDLC Phases Mapped to Security Activities

Every development methodology — waterfall, agile, DevOps, SAFe — passes through the same fundamental phases, even if the cadence and formality differ. The security activities for each phase are:

Figure: SSDLC Phase Map — Visual overview of all lifecycle phases with security activities


Phase 1: Requirements

Security Activities:

- Security requirements elicitation (functional and non-functional)

- Abuse case / misuse case development

- Data classification of all data the application will handle

- Compliance requirement identification (PCI, HIPAA, SOC 2, GDPR, EU AI Act)

- Risk appetite definition and acceptance criteria

- Privacy impact assessment initiation

- AI tool usage policy for the project (which tools, which data classifications)

Key Artifacts: Security requirements document, data classification matrix, compliance checklist, AI tool authorization list

Phase 2: Design

Security Activities:

- Threat modeling (STRIDE, PASTA, Attack Trees, LINDDUN for privacy)

- Security architecture review

- Cryptographic design review

- Authentication and authorization design

- Trust boundary identification

- Secure design pattern selection

- API security design

- AI-generated design validation (if AI tools assisted in architecture)

Key Artifacts: Threat model document, security architecture diagram, trust boundary map, design review sign-off
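As one illustration of how a threat modeling pass can be made repeatable, the sketch below enumerates candidate STRIDE threat categories for each element of a data flow diagram. It assumes a commonly used STRIDE-per-element mapping; the element type names and the function `enumerate_threats` are hypothetical, and the output is a starting checklist for human analysis, not a finished threat model.

```python
# A commonly used STRIDE-per-element mapping (assumption: simplified;
# data stores that hold audit logs are often also checked for Repudiation).
STRIDE_PER_ELEMENT = {
    "external_entity": ["Spoofing", "Repudiation"],
    "process": [
        "Spoofing",
        "Tampering",
        "Repudiation",
        "Information disclosure",
        "Denial of service",
        "Elevation of privilege",
    ],
    "data_store": ["Tampering", "Information disclosure", "Denial of service"],
    "data_flow": ["Tampering", "Information disclosure", "Denial of service"],
}


def enumerate_threats(elements):
    """Return (element_name, threat_category) pairs to review.

    `elements` is a list of (name, element_type) tuples taken from the
    data flow diagram; element_type must be a key of STRIDE_PER_ELEMENT.
    """
    return [
        (name, category)
        for name, element_type in elements
        for category in STRIDE_PER_ELEMENT[element_type]
    ]
```

Each returned pair would then be triaged by the team: dismissed with a rationale, or recorded in the threat model document with a mitigation.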

Phase 3: Implementation

Security Activities:

- Secure coding standard enforcement

- Static Application Security Testing (SAST) — integrated into IDE and CI

- Software Composition Analysis (SCA) — dependency vulnerability scanning

- Secret detection (pre-commit hooks, CI pipeline scanning)

- Code review with security focus

- AI-assisted code review and validation

- AI-generated code security review (treat as untrusted contributor)

- License compliance scanning for AI-suggested dependencies

Key Artifacts: SAST scan results, SCA reports, code review records, secret scan results
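To make the secret detection activity concrete, here is a minimal sketch of the kind of check a pre-commit hook or CI step might run. The three patterns and the function name `find_secrets` are illustrative assumptions; production scanners use much larger, tuned rule sets plus entropy analysis.

```python
import re

# Illustrative patterns only (assumption): a real rule set is far larger.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:password|secret|api_key)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings
```

A hook built on this would read the staged diff, call `find_secrets`, and reject the commit when the result is non-empty.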

Phase 4: Verification

Security Activities:

- Dynamic Application Security Testing (DAST)

- Interactive Application Security Testing (IAST)

- Penetration testing (manual and automated)

- Security regression testing

- Fuzz testing

- API security testing

- Configuration review

- SBOM generation and validation

Key Artifacts: DAST reports, penetration test report, SBOM, security test results

Phase 5: Release

Security Activities:

- Final security review / release gate

- Security sign-off from designated authority

- Vulnerability scan of deployment artifacts (container images, infrastructure-as-code)

- Change management approval with security attestation

- Deployment configuration hardening verification

- Incident response plan validation

- Runtime protection enablement (WAF rules, RASP, monitoring)

Key Artifacts: Release security checklist, deployment scan results, security sign-off record

Phase 6: Respond

Security Activities:

- Vulnerability monitoring and triage

- Security patch management

- Incident response execution

- Bug bounty / vulnerability disclosure program

- Runtime monitoring and alerting

- Security event correlation

- Post-incident review and lessons learned

Key Artifacts: Vulnerability tracking records, incident reports, patch records, monitoring dashboards

Phase 7: Retire

Security Activities:

- Data retention compliance verification

- Secure data destruction

- Credential and secret revocation

- Dependency notification (downstream consumers)

- DNS and certificate cleanup

- Archive security for audit/compliance requirements

- AI model and training data disposition (if applicable)

Key Artifacts: Decommission checklist, data destruction certificate, credential revocation log

3. CIS Safeguard 16.1 — Establish and Maintain a Secure Application Development Process

Official Description

“Establish and maintain a secure application development process. In the process, address such items as: secure application design standards, secure coding practices, developer training, vulnerability management, security of third-party code, and application security testing procedures. Review and update documentation annually, or when significant enterprise changes occur that could impact this Safeguard.”

Asset Type and Security Function

- Asset Type: Applications

- Security Function: Govern

- Implementation Group: IG2

The Six Required Areas

CIS 16.1 explicitly requires documented processes covering six specific areas. Each must be addressed with sufficient detail to be actionable by development teams:

Area 1: Secure Application Design Standards

Your design standards document must define:

- Mandatory threat modeling methodology and when it is triggered (new application, major feature, architecture change)

- Approved authentication patterns (OAuth 2.0 / OIDC flows, session management, MFA requirements by data classification)

- Authorization models (RBAC, ABAC, ReBAC) and when each is appropriate

- Cryptographic standards (approved algorithms, key sizes, key management requirements)

- Input validation and output encoding requirements

- Secure API design patterns (rate limiting, authentication, versioning)

- Logging and monitoring requirements (what to log, what never to log, format, retention)

- Error handling standards (no stack traces in production, generic error messages to users, detailed logging internally)
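The error handling requirement in the last item can be illustrated with a short sketch: the full exception goes to the internal log, while the caller receives only a generic message and a correlation ID. The function name `safe_error_response`, the logger name, and the response shape are assumptions for illustration.

```python
import logging
import uuid

logger = logging.getLogger("app")


def safe_error_response(exc: Exception) -> dict:
    """Log full exception detail internally and return a generic payload.

    The incident ID lets support staff find the detailed log entry
    without exposing a stack trace or exception message to the user.
    """
    incident_id = uuid.uuid4().hex
    # exc_info attaches the traceback to the internal log record only.
    logger.error("Unhandled error [incident %s]", incident_id, exc_info=exc)
    return {"error": "An internal error occurred.", "incident_id": incident_id}
```

The design choice here is that nothing derived from the exception itself reaches the response, so sensitive details in exception messages cannot leak by accident.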

Area 2: Secure Coding Practices

Your coding standard must address, at minimum:

- Language-specific secure coding guidelines (CERT standards for C/C++/Java, language-specific OWASP guidance)

- Input validation requirements (allowlist over denylist, parameterized queries, context-appropriate encoding)

- Authentication and session management implementation standards

- Cryptographic implementation requirements (use vetted libraries, never roll your own)

- Error handling and logging standards

- File and resource handling

- Memory management (for applicable languages)

- Concurrency and race condition prevention

- AI-generated code standards (mandatory review, testing requirements, provenance tracking)
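A coding standard is most useful when it shows the correct pattern. The sketch below pairs allowlist validation with a parameterized query using Python's standard `sqlite3` module. The `users` table, the username rule, and the function names are hypothetical examples, not requirements from any framework.

```python
import re
import sqlite3

# Allowlist rule (assumption): 3 to 32 letters, digits, or underscores.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,32}")


def is_valid_username(username: str) -> bool:
    """Accept only values that fully match the allowlist pattern."""
    return USERNAME_RE.fullmatch(username) is not None


def find_user(conn: sqlite3.Connection, username: str) -> list[tuple]:
    """Look up a user with a parameterized query.

    The `?` placeholder binds the value as data, so it is never
    interpreted as SQL syntax regardless of its content.
    """
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchall()


# Small in-memory database so the example is self-contained.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
```

With this setup, a classic injection string such as `' OR '1'='1` fails the allowlist check and, even if passed to `find_user`, matches no rows because it is compared as a literal value.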

Area 3: Developer Training

Training requirements must specify:

- Initial training for new developers (content, duration, assessment)

- Annual refresher training (minimum content, assessment criteria)

- Role-specific training (developers, architects, security champions, QA, DevOps)

- Language and framework-specific training

- Emerging threat training (updated for current year’s threat landscape)

- AI tool security training (secure use of coding assistants, prompt injection awareness, data handling)

- Training records retention and audit evidence

Area 4: Vulnerability Management

The vulnerability management process must define:

- Vulnerability intake sources (SAST, DAST, SCA, pen tests, bug bounty, vendor advisories)

- Severity classification (CVSS, SSVC, or organizational risk-based approach)

- Remediation SLAs by severity (Critical: 24–72 hours, High: 7–14 days, Medium: 30–60 days, Low: 90 days or next release)

- Exception/risk acceptance process and authority

- Vulnerability tracking and reporting

- Metrics and KPIs (mean time to remediate, vulnerability density, SLA compliance)

- Coordination with AI tool vendors for AI-specific vulnerabilities
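The severity and SLA items above lend themselves to automation. The sketch below maps a CVSS v3.x base score to its qualitative rating and computes a remediation due date. The SLA values are the upper bounds of the example ranges listed above and are assumptions an organization would replace with its own; the function names are hypothetical.

```python
from datetime import datetime, timedelta

# Upper bounds of the example SLA ranges above (assumption: set your own).
REMEDIATION_SLA = {
    "critical": timedelta(hours=72),
    "high": timedelta(days=14),
    "medium": timedelta(days=60),
    "low": timedelta(days=90),
}


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "none"
    if score < 4.0:
        return "low"
    if score < 7.0:
        return "medium"
    if score < 9.0:
        return "high"
    return "critical"


def remediation_due(severity: str, discovered_at: datetime) -> datetime:
    """Return the latest acceptable fix time for a finding."""
    return discovered_at + REMEDIATION_SLA[severity.lower()]


def is_sla_breached(severity: str, discovered_at: datetime, now: datetime) -> bool:
    """True when the finding is still open past its remediation deadline."""
    return now > remediation_due(severity, discovered_at)
```

A tracker integration could call `is_sla_breached` on every open finding to feed the SLA compliance metric, with approved exceptions excluded upstream.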

Area 5: Security of Third-Party Code

Third-party code governance must address:

- Open-source software evaluation criteria (license, maintenance activity, vulnerability history, community size)

- Approved and prohibited license list

- SCA tooling requirements and integration points

- Dependency update cadence and process

- Vendor security assessment for commercial components

- SBOM generation and maintenance requirements

- AI-generated dependency validation (hallucinated packages — “slopsquatting” risk)

- Transitive dependency management
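One lightweight control against hallucinated or typosquatted packages is to compare declared dependencies with an approved list before anything is installed. The sketch below does this for Python requirement lines. The function names and the idea of a flat approved set are assumptions; a real program would typically back this with an internal registry or SCA tool.

```python
import re


def normalize(name: str) -> str:
    """Normalize a Python package name (case, '-', '_' and '.' are equivalent)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unapproved_dependencies(requirements: list[str], approved: set[str]) -> list[str]:
    """Return the names of requirements that are not on the approved list."""
    approved_norm = {normalize(n) for n in approved}
    flagged = []
    for line in requirements:
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Keep only the name, before any version specifier, extra, or marker.
        name = re.split(r"[\s<>=!~\[;@]", line, maxsplit=1)[0]
        if normalize(name) not in approved_norm:
            flagged.append(name)
    return flagged
```

Anything returned by `unapproved_dependencies` would go through the evaluation criteria above before being added to the approved list, which is where a package that does not actually exist upstream would be caught.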

Area 6: Application Security Testing Procedures

Testing procedures must define:

- Testing types required by application risk tier

- Tool standards (approved SAST, DAST, SCA, IAST tools)

- Testing cadence (continuous in CI, periodic manual, annual pen test)

- Results management (triage, false positive handling, suppression governance)

- Quality gates and release blocking criteria

- Penetration testing scope, methodology, and provider requirements

- Testing of AI-augmented features (prompt injection testing, output validation, model behavior testing)
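Quality gates and release blocking criteria are easiest to enforce when expressed as data. The following sketch evaluates open findings against per-tier thresholds. The tier names, threshold values, and the `Finding` and `evaluate_gate` names are all hypothetical; suppressed findings are assumed to have passed the suppression governance process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str          # e.g. "sast", "dast", "sca"
    severity: str      # "critical", "high", "medium", or "low"
    suppressed: bool = False


# Hypothetical limits: maximum open findings allowed per severity and risk tier.
GATE_THRESHOLDS = {
    "high_risk": {"critical": 0, "high": 0, "medium": 5},
    "standard": {"critical": 0, "high": 3, "medium": 20},
}


def evaluate_gate(findings: list[Finding], risk_tier: str) -> tuple[bool, list[str]]:
    """Return (passed, violations) for a release candidate."""
    limits = GATE_THRESHOLDS[risk_tier]
    counts: dict[str, int] = {}
    for finding in findings:
        if not finding.suppressed:
            counts[finding.severity] = counts.get(finding.severity, 0) + 1
    violations = [
        f"{severity}: {counts[severity]} open, limit {limit}"
        for severity, limit in limits.items()
        if counts.get(severity, 0) > limit
    ]
    return (not violations, violations)
```

In a CI pipeline, a failed gate would stop promotion and print the violations, while the same findings might pass for an application in a lower risk tier.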

Annual Review Cycle

CIS 16.1 mandates review and update of the documented process:

- Annually at minimum, or

- When significant enterprise changes occur, including: major technology stack changes, organizational restructuring, significant incident findings, new regulatory requirements, adoption of new AI development tools

The review should include an assessment of effectiveness (Are vulnerabilities decreasing? Are SLAs being met?) and a gap analysis against current frameworks and threats.

4. Major SSDLC Frameworks

NIST SP 800-218 — Secure Software Development Framework (SSDF) v1.1

The SSDF provides a core set of high-level secure software development practices organized into four practice groups:

Prepare the Organization (PO)

Practices focused on ensuring the organization, its people, and its processes are prepared to perform secure software development:

- PO.1: Define security requirements for software development

- PO.2: Implement roles and responsibilities

- PO.3: Implement supporting toolchains

- PO.4: Define and use criteria for software security checks

- PO.5: Implement and maintain secure environments for development

Protect the Software (PS)

Practices focused on protecting all components of the software from tampering and unauthorized access:

- PS.1: Protect all forms of code from unauthorized access and tampering

- PS.2: Provide a mechanism for verifying software release integrity

- PS.3: Archive and protect each software release

Produce Well-Secured Software (PW)

Practices focused on producing well-secured software with minimal vulnerabilities:

- PW.1: Design software to meet security requirements and mitigate security risks

- PW.2: Review the software design to verify compliance with security requirements and risk information

- PW.4: Reuse existing, well-secured software when feasible instead of duplicating functionality (PW.3 is not used in v1.1; its content was folded into PW.4)

- PW.5: Create source code by adhering to secure coding practices

- PW.6: Configure the compilation, interpreter, and build processes to improve executable security

- PW.7: Review and/or analyze human-readable code to identify vulnerabilities and verify compliance with security requirements

- PW.8: Test executable code to identify vulnerabilities and verify compliance with security requirements

- PW.9: Configure software to have secure settings by default

Respond to Vulnerabilities (RV)

Practices focused on identifying residual vulnerabilities in software releases and responding appropriately:

- RV.1: Identify and confirm vulnerabilities on an ongoing basis

- RV.2: Assess, prioritize, and remediate vulnerabilities

- RV.3: Analyze vulnerabilities to identify their root causes

The SSDF is technology-agnostic and methodology-agnostic. It does not prescribe specific tools or processes — it defines outcomes that any secure development process must achieve. This makes it an excellent reference framework for mapping organizational processes to federal requirements.

OWASP Software Assurance Maturity Model (SAMM) v2.0

OWASP SAMM organizes software security practices into five business functions, each containing three security practices (15 total), each with two activity streams:

| Business Function | Security Practice 1 | Security Practice 2 | Security Practice 3 |
|---|---|---|---|
| Governance | Strategy & Metrics | Policy & Compliance | Education & Guidance |
| Design | Threat Assessment | Security Requirements | Security Architecture |
| Implementation | Secure Build | Secure Deployment | Defect Management |
| Verification | Architecture Assessment | Requirements-Driven Testing | Security Testing |
| Operations | Incident Management | Environment Management | Operational Management |

Each practice has three maturity levels (1–3), allowing organizations to benchmark their current state and plan improvement roadmaps. SAMM’s strength is its assessment model — it provides concrete, measurable criteria for each maturity level, enabling objective measurement of security program progress.

Microsoft Security Development Lifecycle (SDL)

Microsoft’s SDL, refined over two decades of practice, defines 10 core practices:

1. Provide Training: All team members receive appropriate security training

2. Define Security Requirements: Establish security and privacy requirements early

3. Define Metrics and Compliance Reporting: Define minimum acceptable security quality levels

4. Perform Threat Modeling: Apply structured threat analysis to all significant features

5. Establish Design Requirements: Define and publish secure design specifications

6. Define and Use Cryptography Standards: Establish encryption standards and review implementation

7. Manage the Security Risk of Using Third-Party Components: Maintain inventory and vulnerability awareness

8. Use Approved Tools: Define and enforce approved toolchains (compilers, linkers, analyzers)

9. Perform Static Analysis Security Testing: Require SAST on all code

10. Perform Dynamic Analysis Security Testing: Require DAST / runtime verification

Microsoft publicly attributes significant reduction in vulnerabilities across its product line to SDL adoption. The SDL is particularly influential because it originated from a shipping software company, not an academic or standards body — it was born from production necessity.

Building Security In Maturity Model (BSIMM)

BSIMM is unique among these frameworks: it is descriptive, not prescriptive. Rather than defining what organizations should do, BSIMM measures what organizations actually do by conducting detailed assessments of real software security initiatives across hundreds of firms.

BSIMM organizes findings into four domains:

- Governance: Strategy, metrics, compliance, training, policy

- Intelligence: Attack models, security features, standards, threat assessment

- SSDL Touchpoints: Architecture analysis, code review, security testing

- Deployment: Penetration testing, software environment, configuration management, vulnerability management

Each domain contains multiple practices, and each practice has three maturity levels. BSIMM’s value is benchmarking — it tells you how your program compares to peers in your industry and at your scale.

CISA Secure by Design

CISA’s Secure by Design initiative defines three core principles:

1. Take Ownership of Customer Security Outcomes: Manufacturers must own the security of their products, not shift responsibility to customers through configuration guides and patches

2. Embrace Radical Transparency and Accountability: Publish CVE data, maintain vulnerability disclosure programs, be transparent about security practices

3. Build Organizational Structure and Leadership to Achieve These Goals: Security must be a board-level concern with executive accountability

The Secure by Design Pledge identifies seven concrete goals:

1. Increase use of multi-factor authentication

2. Reduce default passwords

3. Reduce entire classes of vulnerabilities (memory safety, SQL injection, XSS)

4. Increase customer patching adoption

5. Publish a vulnerability disclosure policy

6. Publish CVEs with CWE and CPE data

7. Provide evidence of intrusions to customers

As of early 2025, over 250 companies have signed the pledge. CISA’s approach is notable for its focus on eliminating vulnerability classes through language and framework choices rather than finding and fixing individual instances.

SAFECode Fundamental Practices

The Software Assurance Forum for Excellence in Code (SAFECode) brings together major technology companies to publish consensus secure development guidance. Their fundamental practices include:

- Security requirements definition

- Threat modeling and design review

- Secure coding practices (language-specific guidance)

- Security testing (SAST, DAST, fuzz testing, pen testing)

- Vulnerability management and response

- Code review practices

- Third-party component management

- Supply chain integrity

- Training and awareness

SAFECode’s publications are particularly valuable for their practicality — they represent what companies like Adobe, Intel, Microsoft, SAP, and Siemens actually implement, validated by real-world experience at scale.

5. Building the Governance Document Set

A mature SSDLC program requires at minimum 10 governance documents. Each must be formally owned, reviewed on a defined cadence, and accessible to all relevant personnel:

Document 1: Secure Development Policy

The umbrella policy that establishes the organization’s commitment to secure development, defines scope, assigns accountability, and references all subordinate documents. This is the document executives sign and auditors request first.

Key contents: Purpose, scope, roles and responsibilities, policy statements, exceptions process, enforcement, references to all subordinate documents.

Document 2: Coding Standard

Language-specific and framework-specific secure coding requirements. Must be actionable by developers — not abstract principles but concrete rules with code examples of correct and incorrect patterns.

Key contents: Language-specific rules, input validation, output encoding, authentication patterns, session management, cryptography usage, error handling, logging, AI-generated code requirements.

Document 3: Code Review Policy

Defines when code review is required, who may approve, minimum review criteria, security-specific review checklist items, and documentation requirements.

Key contents: Review triggers, reviewer qualifications, security checklist, AI-generated code review requirements (all AI-assisted code must be reviewed by a human who understands it), approval criteria, documentation.

Document 4: Vulnerability Management Policy

Governs the intake, triage, prioritization, remediation, and tracking of all security vulnerabilities across the application portfolio.

Key contents: Vulnerability sources, severity classification, remediation SLAs, exception process, metrics, reporting cadence, integration with ticketing/tracking systems.

Document 5: Change Management Policy

Controls how changes to production systems are proposed, reviewed, approved, implemented, and validated. Must include security review as a gate.

Key contents: Change categories, approval workflows, security review requirements, emergency change process, rollback procedures, post-implementation verification.

Document 6: Release Management Policy

Defines the process for promoting code from development through staging to production, including all required security gates.

Key contents: Environment promotion criteria, security testing requirements per environment, release approval authority, release documentation, rollback criteria.

Document 7: Incident Response Plan (IRP)

Specifically for application security incidents — different from infrastructure IRP in scope and procedures, though they should integrate.

Key contents: Incident classification, notification procedures, containment strategies (application-specific: feature flags, WAF rules, rollback), investigation procedures, communication plan, post-incident review.

Document 8: Third-Party / Open-Source Software (OSS) Policy

Governs the evaluation, approval, integration, monitoring, and retirement of third-party and open-source components.

Key contents: Evaluation criteria (security, license, maintenance), approved/prohibited license list, SCA tooling requirements, update cadence, SBOM requirements, vendor security assessment process.

Document 9: Data Classification Policy

Defines data sensitivity levels and the handling requirements for each level. This document directly informs what data may be processed by AI tools.

Key contents: Classification levels (Public, Internal, Confidential, Restricted), handling requirements per level, labeling requirements, AI tool data restrictions per level, examples.

Document 10: Access Control Policy

Defines how access to development systems, source code, CI/CD pipelines, production environments, and AI development tools is granted, reviewed, and revoked.

Key contents: Least privilege requirements, access request/approval process, periodic access review cadence, separation of duties, privileged access management, AI tool access provisioning and deprovisioning.

6. AI Acceptable Use Policies

The rapid adoption of AI coding assistants has created an urgent governance gap in most organizations. Figures reported in industry surveys illustrate the scale of the problem:

- 98% of organizations report some level of unsanctioned AI tool use

- Only 37% have formal AI governance policies in place

- 68% of employees admit to using unauthorized AI tools for work

- 79% of engineering teams have at least one member using AI tools without formal approval

- When approved tools are provided with clear policies, unauthorized AI use drops by 89%

The lesson is clear: prohibition does not work. Governance through enablement does.

Three-Tier Tool Classification

An effective AI tool governance model classifies tools into three tiers based on their security controls, data handling, and organizational risk:

Tier 1: Fully Approved

Tools that meet all organizational security requirements and are authorized for use with all non-restricted data classifications.

Characteristics:

- Enterprise license with SSO/SAML integration

- Data retention controls (zero retention or organization-controlled retention)

- Audit logging available to the organization

- Content exclusion / context filtering capabilities

- Compliance certifications relevant to the organization (SOC 2, ISO 27001)

- Contractual data processing agreements in place

- IP indemnification provisions

Examples: GitHub Copilot Enterprise (with SSO, audit logging, content exclusions configured), Claude for Enterprise (with SSO, zero retention), internally hosted models

Permitted use: All projects, all non-restricted data classifications, production code

Tier 2: Limited Use

Tools that provide some security controls but do not meet full enterprise requirements. Authorized for specific use cases with guardrails.

Characteristics:

- Professional/team license with some access controls

- Some data handling guarantees (e.g., no training on user data)

- Limited or no audit logging

- Limited content exclusion capabilities

- May lack enterprise compliance certifications

Permitted use: Non-sensitive projects only, public/internal data classification only, prototyping and learning, must not be used with proprietary algorithms, customer data, or security-sensitive code

Tier 3: Prohibited

Tools that do not meet minimum security requirements for any work-related use.

Characteristics:

- Free tier or consumer plans

- Data may be used for model training

- No organizational access controls

- No audit logging

- No data processing agreements

- No content exclusion capabilities

Examples: Free-tier ChatGPT for code generation, unvetted browser extensions with AI features, unofficial API wrappers

Permitted use: None for work-related tasks. Personal learning on personal devices with no work data is outside organizational scope.
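The three tiers can be encoded as a small decision rule so that tool requests are assessed consistently. The sketch below is one possible reading of the characteristics listed above, not a definitive policy: the field names, the rule that training on user data or a missing data processing agreement forces Tier 3, and the data classification names are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FULLY_APPROVED = 1
    LIMITED_USE = 2
    PROHIBITED = 3


@dataclass(frozen=True)
class ToolProfile:
    sso: bool
    retention_controls: bool
    audit_logging: bool
    content_exclusion: bool
    compliance_certified: bool
    dpa_in_place: bool
    ip_indemnification: bool
    trains_on_user_data: bool


def classify_tool(profile: ToolProfile) -> Tier:
    """Assign a tier from a tool's security characteristics."""
    # Assumption: either condition fails the minimum bar for any work use.
    if profile.trains_on_user_data or not profile.dpa_in_place:
        return Tier.PROHIBITED
    meets_all_tier1 = all(
        [
            profile.sso,
            profile.retention_controls,
            profile.audit_logging,
            profile.content_exclusion,
            profile.compliance_certified,
            profile.ip_indemnification,
        ]
    )
    return Tier.FULLY_APPROVED if meets_all_tier1 else Tier.LIMITED_USE


# Data classifications each tier may process, following the permitted-use text.
PERMITTED_DATA = {
    Tier.FULLY_APPROVED: {"public", "internal", "confidential"},
    Tier.LIMITED_USE: {"public", "internal"},
    Tier.PROHIBITED: set(),
}


def may_process(tier: Tier, classification: str) -> bool:
    """True when a tool in `tier` may handle data of the given classification."""
    return classification.lower() in PERMITTED_DATA[tier]
```

Keeping the rule in code also gives the quarterly reclassification review something concrete to diff when a vendor changes its contract terms or data handling.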

Shadow AI Governance

Shadow AI — the use of unauthorized AI tools by employees — is the AI equivalent of shadow IT, but with higher velocity and greater data exposure risk. An employee pasting proprietary source code into a free-tier AI chat to “get help with a bug” has potentially exposed that code to the AI provider, their training pipeline, and anyone who can extract it.

Detection methods:

- SaaS discovery platforms that identify AI service usage through SSO logs, DNS queries, and browser telemetry

- Browser extension auditing through endpoint management

- Endpoint DLP solutions that monitor clipboard operations and browser form submissions

- API call pattern analysis at the network perimeter (detecting calls to known AI API endpoints)

- Cloud Access Security Brokers (CASB) with AI service categorization

- Periodic self-attestation surveys (trust but verify)
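As a rough illustration of the network-based detection methods, the sketch below counts DNS lookups of known AI API hostnames per client, ignoring hostnames that belong to sanctioned tools. The two hostnames are a deliberately incomplete sample, and the log format of (client, hostname) pairs and the function name `flag_ai_queries` are assumptions; real deployments would rely on a CASB or SaaS discovery feed.

```python
from collections import Counter

# Illustrative, incomplete sample of AI API hostnames (assumption).
AI_API_DOMAINS = {"api.openai.com", "api.anthropic.com"}


def flag_ai_queries(dns_log, sanctioned=frozenset()) -> Counter:
    """Count lookups of unsanctioned AI API hostnames per client.

    `dns_log` is an iterable of (client, queried_hostname) pairs.
    `sanctioned` holds hostnames used by approved (Tier 1) tools.
    """
    hits = Counter()
    for client, host in dns_log:
        host = host.rstrip(".").lower()  # normalize trailing dot and case
        if host in AI_API_DOMAINS and host not in sanctioned:
            hits[client] += 1
    return hits
```

A non-empty result is a prompt for a conversation and a tool request, consistent with a proportionate response, rather than proof of a policy violation.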

Mitigation strategy:

- Provide approved tools (Tier 1) that meet developer needs

- Communicate clearly why policies exist (data protection, not productivity restriction)

- Make approved tools easy to access and use

- Monitor for policy violations with proportionate response

- Regularly reassess tool classifications as capabilities and contracts evolve

7. Framework Cross-Reference Table

| Topic | CIS v8 | NIST SSDF | OWASP SAMM | MS SDL | BSIMM | CISA SbD | SAFECode |
|---|---|---|---|---|---|---|---|
| Process Documentation | 16.1 | PO.1 | G-SM | Practice 1 | G-SM | Principle 3 | Governance |
| Design Standards | 16.1 | PW.1 | D-SA | Practice 5 | I-SD | Principle 1 | Design Review |
| Coding Practices | 16.1 | PW.5, PW.6 | I-SB | Practice 8 | ST-CR | Goal 3 | Coding Practices |
| Training | 16.1 | PO.2 | G-EG | Practice 1 | G-T | Principle 3 | Training |
| Vuln Management | 16.1 | RV.1, RV.2 | I-DM | Practice 10 | D-VM | Goal 5, 6 | Vuln Mgmt |
| Third-Party Code | 16.1 | PW.4 | I-SB | Practice 7 | I-SS | Goal 3 | Third-Party |
| Security Testing | 16.1 | PW.7, PW.8 | V-ST | Practice 9, 10 | ST-PT | Goal 3 | Testing |
| Annual Review | 16.1 | PO.1 | G-SM | All | G-CP | Principle 3 | Governance |

8. Annual Review and Continuous Improvement

The SSDLC is not a “set and forget” program. It requires continuous measurement, review, and improvement:

Quarterly Activities

- Review vulnerability metrics (density trends, SLA compliance, MTTR)

- Review security testing coverage metrics

- Review training completion rates

- Assess new AI tool requests and reclassify existing tools

- Review security champion effectiveness

- Triage policy exception backlog

Annual Activities

- Full policy document review and update

- Framework alignment assessment (have CIS, NIST, or OWASP published updates?)

- Threat landscape update (new vulnerability classes, new attack techniques)

- Tool effectiveness evaluation (are current tools catching real vulnerabilities?)

- Training program content refresh (new threats, new tools, new policies)

- Maturity model assessment (SAMM or BSIMM) to measure progress

- AI tool landscape reassessment (new tools, changed capabilities, changed data handling)

- Regulatory change impact assessment

Continuous Improvement Metrics

| Metric | Target Direction | Measurement Source |
|---|---|---|
| Vulnerability density (per KLOC) | Decreasing | SAST/DAST aggregation |
| Mean time to remediate (MTTR) | Decreasing | Vulnerability tracker |
| SLA compliance rate | Increasing | Vulnerability tracker |
| Security training completion | >95% | LMS |
| Code review coverage | 100% | SCM / PR data |
| SAST coverage (repos scanned) | 100% | CI/CD pipeline data |
| SCA coverage (repos scanned) | 100% | CI/CD pipeline data |
| Critical/high findings in production | Decreasing | Production scan data |
| AI policy compliance rate | >95% | CASB / audit data |
| Shadow AI detection incidents | Decreasing | SaaS discovery |
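Three of the metrics in the table reduce to simple arithmetic, sketched below. The function names and input shapes are assumptions; in practice the inputs would come from the vulnerability tracker and from SAST/DAST aggregation.

```python
from datetime import datetime
from statistics import mean


def mttr_days(records):
    """Mean time to remediate, in days.

    `records` is an iterable of (opened, closed) datetimes for
    remediated findings. Returns None when there are no records.
    """
    durations = [
        (closed - opened).total_seconds() / 86_400 for opened, closed in records
    ]
    return mean(durations) if durations else None


def sla_compliance_rate(met_flags):
    """Fraction of findings remediated within SLA (True means SLA met)."""
    flags = list(met_flags)
    return sum(flags) / len(flags) if flags else None


def vulnerability_density(finding_count: int, lines_of_code: int) -> float:
    """Findings per thousand lines of code (KLOC)."""
    return finding_count / (lines_of_code / 1000)
```

Tracking these per quarter, rather than as single snapshots, is what makes the target directions in the table checkable.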

Summary

CIS Safeguard 16.1 is the foundation upon which all other CIS Control 16 safeguards are built. Without a documented, maintained, and enforced secure development process, individual security activities are ad hoc and inconsistent. The key takeaways:

- SSDLC is economics: Finding and fixing security issues early is orders of magnitude cheaper than finding them in production or after a breach

- Every phase has security activities: From requirements through retirement, security is never “someone else’s job later”

- Six areas must be documented: Design standards, coding practices, training, vulnerability management, third-party code, and security testing

- Frameworks provide structure: NIST SSDF, OWASP SAMM, Microsoft SDL, BSIMM, CISA Secure by Design, and SAFECode all provide complementary perspectives

- Ten governance documents form the foundation: Each must be owned, maintained, and accessible

- AI governance is not optional: With 98% of organizations reporting unsanctioned AI use, the question is not whether to govern AI tools but how quickly you can establish effective governance

- Annual review is mandatory: The threat landscape, tool landscape, and regulatory landscape all evolve — your SSDLC must evolve with them

Assessment Questions

- A security vulnerability is found during penetration testing that would have cost $1,000 to fix during design. What is the approximate cost to fix it now, and what does this illustrate about shift-left?

- List the six areas that CIS Safeguard 16.1 requires to be documented. For each, provide one specific element that must be included.

- Your organization wants to adopt an AI coding assistant. Using the three-tier classification model, what criteria would place a tool in Tier 1 (Fully Approved) vs. Tier 3 (Prohibited)?

- Compare and contrast OWASP SAMM and BSIMM. How are they similar? How do they fundamentally differ in approach?

- A developer is using a free-tier AI chatbot to help debug production code that handles PII. What policies are being violated, what are the risks, and how would you remediate this situation?

References

- CIS Controls v8, Safeguard 16.1

- NIST SP 800-218: Secure Software Development Framework (SSDF) v1.1

- OWASP Software Assurance Maturity Model (SAMM) v2.0

- Microsoft Security Development Lifecycle (SDL)

- BSIMM14 Report

- CISA Secure by Design Whitepaper and Pledge

- SAFECode Fundamental Practices for Secure Software Development, Third Edition

- IBM Systems Sciences Institute: Relative Cost of Fixing Defects

- NIST SP 800-160 Vol. 1: Systems Security Engineering

Study Guide

Key Takeaways

- SSDLC is an economic imperative — Security defects cost exponentially more to fix the later they are discovered, from 1x at requirements to 640x+ post-breach.

- Shift-left does not mean shift-only-left — Security activities must exist at every phase, creating defense in depth within the development process itself.

- CIS 16.1 requires six documented areas — Design standards, coding practices, training, vulnerability management, third-party code, and security testing procedures.

- Ten governance documents form the foundation — From Secure Development Policy to Access Control Policy, each must be owned, reviewed, and accessible.

- Frameworks provide complementary perspectives — NIST SSDF defines outcomes, OWASP SAMM measures maturity, BSIMM benchmarks against peers, and CISA Secure by Design eliminates vulnerability classes.

- AI governance is urgent — With 98% of organizations reporting unsanctioned AI use and only 37% having formal policies, the three-tier tool classification model is essential.

- Annual review is mandatory — Threat landscape, tool landscape, and regulatory landscape all evolve; your SSDLC must evolve with them.

Important Definitions

| Term | Definition |
|---|---|
| SSDLC | Secure Software Development Lifecycle: integrating security into every phase of software development |
| Shift-Left | Moving security activities as early as possible in the development lifecycle |
| CIS 16.1 | CIS Controls safeguard requiring a documented secure application development process covering six areas |
| NIST SSDF | Secure Software Development Framework, organized into four practice groups: Prepare, Protect, Produce, Respond |
| OWASP SAMM | Software Assurance Maturity Model: five business functions, each containing three security practices assessed at three maturity levels |
| BSIMM | Building Security In Maturity Model: a descriptive (not prescriptive) framework measuring what organizations actually do |
| Shadow AI | Use of AI tools by employees without organizational approval |
| Three-Tier Classification | AI tool governance model: Tier 1 (Fully Approved), Tier 2 (Limited Use), Tier 3 (Prohibited) |

Quick Reference

- Framework/Process: CIS 16.1 mandates six documented areas with annual review; NIST SSDF provides four practice groups (PO, PS, PW, RV)

- Key Numbers: 640x+ cost multiplier for post-breach defects; 98% unsanctioned AI use; 89% reduction when approved tools are provided; minimum of 10 governance documents

- Common Pitfalls: Treating security as a bolt-on phase rather than an embedded discipline; relying on generic training instead of role-specific training; ignoring AI tool governance; assuming “the framework handles security”

Review Questions

- Why is BSIMM described as “descriptive, not prescriptive,” and how does that differ from NIST SSDF?

- How do the seven SDLC phases each contribute to defense in depth within the development process?

- What distinguishes a Tier 1 AI tool from a Tier 3 tool, and why does prohibition fail as a governance strategy?

- If your organization has no SSDLC program today, which of the ten governance documents would you create first and why?

- How would you measure continuous improvement in an SSDLC program using the metrics table provided?