7.1 — Vulnerability Management Program

Learning Objectives

- ✓ Design and implement a complete vulnerability management lifecycle from discovery through closure and lessons learned.

- ✓ Establish an external vulnerability reporting mechanism with appropriate SLAs, safe harbor provisions, and responsible party designation.

- ✓ Apply severity rating systems (CVSS 4.0, EPSS, organizational severity) to prioritize remediation effectively.

- ✓ Conduct root cause analysis that identifies systemic patterns and feeds improvements back into the SDLC.

- ✓ Evaluate and deploy AI-powered vulnerability management tools to handle the scale of modern vulnerability landscapes.

- ✓ Build executive-level dashboards and metrics that demonstrate vulnerability management program effectiveness.

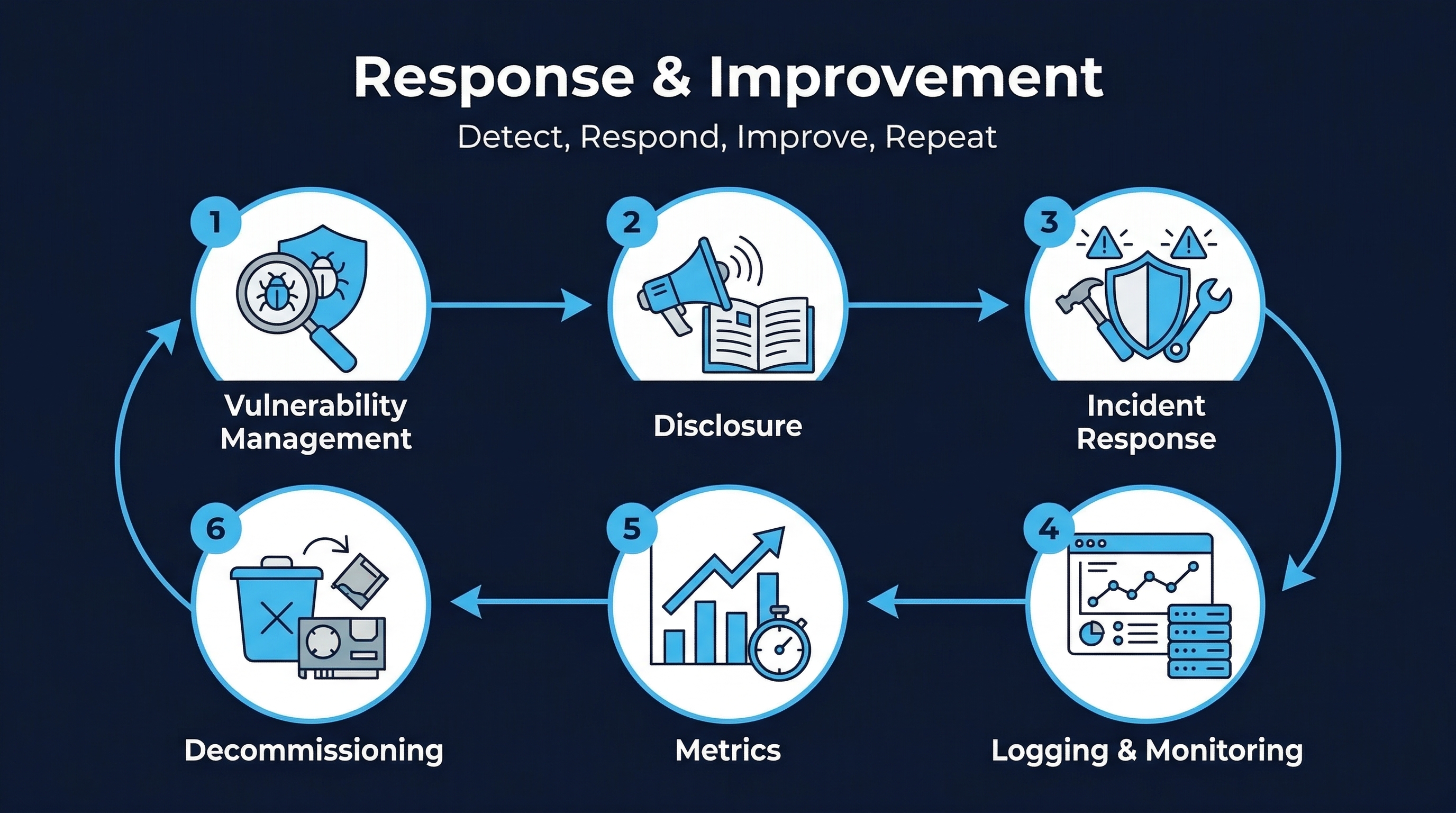

Figure: Response & Improvement Overview — Track 7 coverage of vulnerability management, incident response, and continuous improvement

1. The Scale of the Problem

Vulnerability management is no longer a task that can be handled manually or through periodic review cycles. The numbers tell a stark story:

- 2024: Over 40,000 CVEs published. This was considered a record.

- 2025: Approximately 47,000 CVEs published, a 17% year-over-year increase.

- 2026 projection: Approximately 59,000 CVEs expected, continuing the exponential growth curve.

- 2028 projection: Approaching 193,000 CVEs annually in high-end forecasts; steady 17% annual growth from the 2026 figure would give roughly 81,000.

A mid-sized enterprise managing 50,000 to 150,000 known vulnerabilities across its attack surface cannot triage, prioritize, and remediate at this volume using spreadsheets and weekly meetings. The vulnerability management program must be systematic, automated, and risk-informed.

CIS Safeguard 16.2 — Vulnerability Reporting and Response Process

CIS 16.2 requires organizations to:

- Establish a process to accept and address software vulnerability reports.

- Provide an external reporting mechanism (e.g., a published security contact or vulnerability disclosure policy).

- Designate a named responsible party for intake, assignment, and tracking.

- Define severity ratings and timing metrics for response.

- Review the process annually and update as necessary.

CIS Safeguard 16.3 — Root Cause and Corrective Action

CIS 16.3 requires:

- Performing root cause analysis on vulnerabilities to identify patterns beyond individual bugs.

- Categorizing vulnerabilities by type (e.g., CWE classification).

- Feeding lessons learned back into the SDLC to prevent recurrence.

CIS Safeguard 16.6 — Severity Rating and Prioritization

CIS 16.6 requires:

- A defined severity rating system for vulnerability prioritization.

- Criteria for remediation order based on risk and exploitability.

- Minimum security acceptability criteria for release.

- Annual review and update of the rating system.

2. The Vulnerability Management Lifecycle

A mature vulnerability management program follows seven distinct phases. Each phase has specific inputs, activities, outputs, and responsible parties.

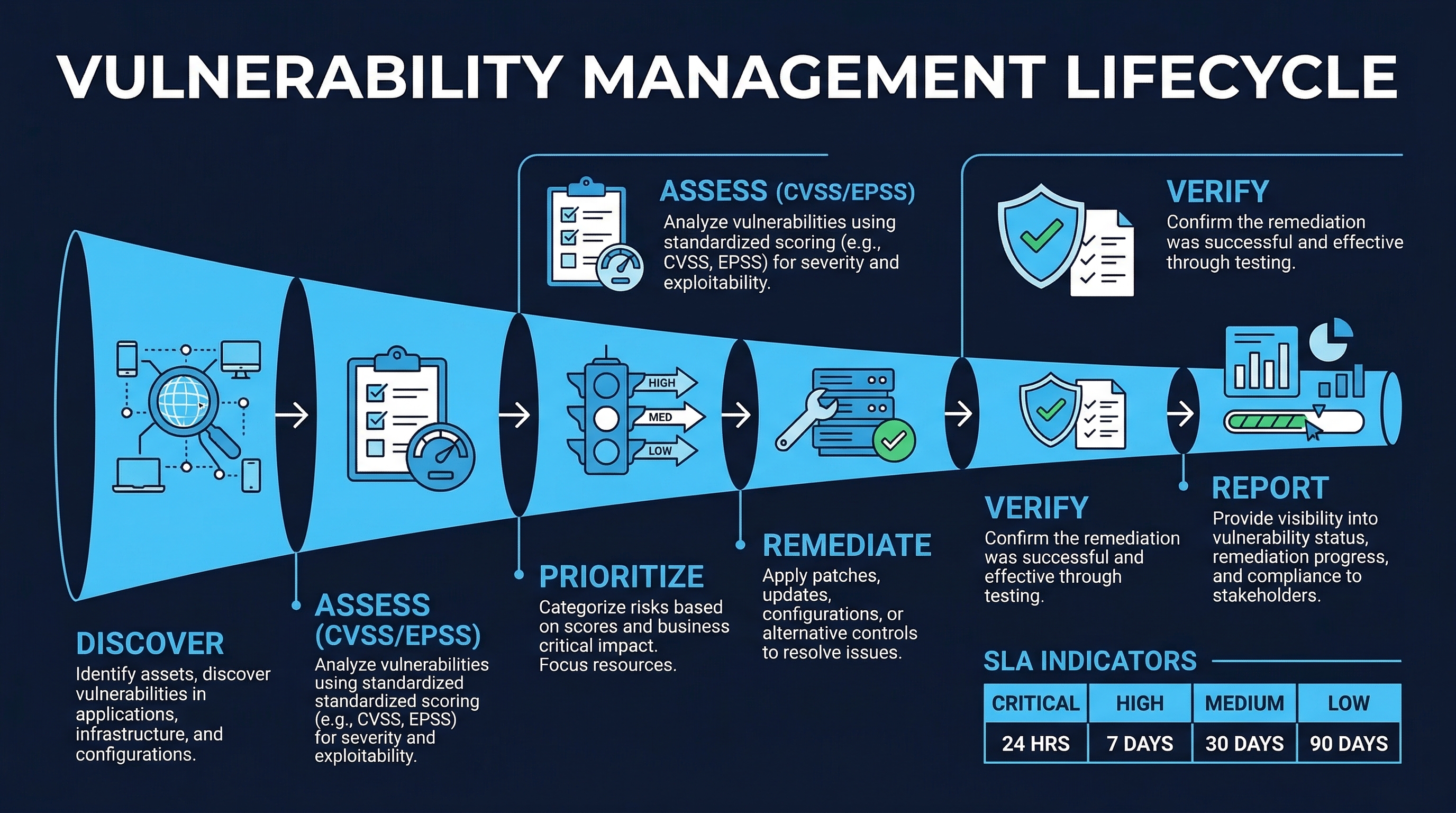

Figure: Vulnerability Management Lifecycle — Seven phases from discovery through lessons learned

Phase 1: Discovery

Discovery is the process of identifying vulnerabilities across the application portfolio. Sources include:

Automated scanning:

- SAST (Static Application Security Testing): Analyzes source code for security flaws during development. Tools: Semgrep, SonarQube, Checkmarx, CodeQL. Run in CI/CD on every pull request.

- DAST (Dynamic Application Security Testing): Tests running applications for exploitable vulnerabilities. Tools: OWASP ZAP, Burp Suite, Qualys WAS. Run against staging/production environments.

- SCA (Software Composition Analysis): Identifies known vulnerabilities in third-party dependencies. Tools: Snyk, Dependabot, Mend, OWASP Dependency-Check. Run on every build.

- IAST (Interactive Application Security Testing): Monitors application behavior during testing. Tools: Contrast Security, HCL AppScan. Run during QA testing.

- Container scanning: Scans container images for OS and application vulnerabilities. Tools: Trivy, Grype, Prisma Cloud.

Manual assessment:

- Penetration testing: Authorized simulated attacks performed by skilled security professionals. Typically quarterly or annually for critical applications.

- Code review: Manual security-focused code review of high-risk changes.

- Architecture review: Assessment of design-level security weaknesses.

External reporting:

- Bug bounty programs: Paid vulnerability discovery through platforms like HackerOne or Bugcrowd.

- Vulnerability disclosure policy (VDP) submissions: Unpaid, good-faith reports from security researchers.

- Vendor advisories: Notifications from software vendors about vulnerabilities in their products.

- CERT/CC notifications: Coordinated disclosure through CERT/CC or national CERTs.

- Customer reports: Vulnerability reports from customers or partners.

Threat intelligence:

- CVE feeds: NVD, MITRE CVE, vendor-specific feeds.

- CISA KEV (Known Exploited Vulnerabilities): Actively exploited vulnerabilities requiring urgent attention.

- Dark web monitoring: Intelligence on vulnerabilities being traded or discussed in underground forums.

Phase 2: Triage

Triage is the process of evaluating and classifying each discovered vulnerability to determine its priority. This is the phase where most organizations fail at scale — and where AI provides the greatest leverage.

Severity classification: Apply the organization’s severity rating system (see Section 4) to assign an initial severity level. This considers:

- Technical severity: How dangerous is the vulnerability from a purely technical standpoint? CVSS 4.0 base score provides this.

- Exploitability: How likely is this vulnerability to be exploited in the wild? EPSS (Exploit Prediction Scoring System) provides a probability of exploitation within 30 days.

- Business impact: What would happen if this vulnerability were exploited? Consider data sensitivity, regulatory exposure, financial impact, and reputational damage.

- Environmental factors: Is the vulnerable component internet-facing? Is it behind compensating controls? What is the blast radius?

Deduplication: Multiple scanners often report the same vulnerability. Triage must identify and consolidate duplicates. A single SQL injection finding reported by SAST, DAST, and a pen tester is one vulnerability, not three.
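The consolidation step can be sketched as a fingerprinting pass. This is a minimal illustration, assuming every finding has already been normalized to a CWE ID, a component, and a location; the `Finding` fields and the `fingerprint` rule are hypothetical and not taken from any particular tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    source: str      # e.g. "sast", "dast", "pentest"
    cwe: str         # e.g. "CWE-89"
    component: str   # owning service or repository (hypothetical field)
    location: str    # endpoint or path, already normalized by the caller


def fingerprint(f: Finding) -> tuple[str, str, str]:
    """Key under which findings with the same weakness and location collapse."""
    return (f.cwe.upper(), f.component.lower(), f.location.lower().rstrip("/"))


def deduplicate(findings: list[Finding]) -> dict[tuple[str, str, str], list[str]]:
    """Group findings by fingerprint and record which sources reported each one."""
    merged: dict[tuple[str, str, str], list[str]] = defaultdict(list)
    for f in findings:
        merged[fingerprint(f)].append(f.source)
    return dict(merged)


findings = [
    Finding("sast", "CWE-89", "user-api", "/users/search"),
    Finding("dast", "cwe-89", "user-api", "/users/search/"),
    Finding("pentest", "CWE-89", "User-API", "/users/search"),
    Finding("sast", "CWE-79", "user-api", "/users/profile"),
]
unique = deduplicate(findings)
print(len(unique))  # 2 distinct vulnerabilities from 4 raw findings
```

In practice the hard part is the normalization this sketch takes for granted: SAST tools report file paths while DAST tools report URLs, so mapping both to a shared location usually needs route metadata or manual correlation.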

Validation: Automated scanners produce false positives. Triage should validate findings before routing them for remediation, particularly for high-severity items. Validation methods include:

- Confirming the vulnerability exists in the current codebase (not dead code).

- Verifying the vulnerable component is reachable from the attack surface.

- Checking whether existing controls mitigate the risk.

- Attempting exploitation in a safe environment.

Phase 3: Assignment

Once triaged, each vulnerability must be assigned to the team and individual responsible for remediation. Assignment considers:

- Ownership mapping: Which team owns the affected component? Use a service catalog or CMDB.

- Expertise: Does the remediation require specialized knowledge (e.g., cryptography, infrastructure)?

- Capacity: Is the assigned team already overloaded with security debt?

- SLA clock start: The SLA timer begins when the vulnerability is assigned, not when it was discovered.

Assignment must be tracked in a centralized system with clear ownership, not in email or chat messages that can be lost.
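A minimal sketch of ownership lookup and SLA clock start follows. The `SERVICE_CATALOG` contents, the `security-triage` fallback queue, and the ticket fields are all hypothetical; the remediation windows mirror the organizational SLAs defined in Section 4.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical service catalog: component -> owning team.
SERVICE_CATALOG = {"api-gateway": "platform-team", "billing": "payments-team"}

# Remediation windows per severity (values from the Section 4 SLA table).
SLA_WINDOWS = {
    "critical": timedelta(hours=24),
    "high": timedelta(days=7),
    "medium": timedelta(days=30),
    "low": timedelta(days=90),
}


def assign(component: str, severity: str, assigned_at: datetime) -> dict:
    """Look up the owning team and start the SLA clock at assignment time."""
    owner = SERVICE_CATALOG.get(component, "security-triage")  # assumed fallback
    return {
        "component": component,
        "owner": owner,
        "assigned_at": assigned_at,
        "due_at": assigned_at + SLA_WINDOWS[severity],
    }


ticket = assign("api-gateway", "high", datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc))
print(ticket["owner"], ticket["due_at"].isoformat())
```

Routing unknown components to a default queue, rather than raising an error, keeps findings from being dropped when the catalog is incomplete.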

Phase 4: Remediation

Remediation is the process of eliminating or mitigating the vulnerability. Options include:

- Fix: Modify the code to eliminate the vulnerability entirely. This is the preferred option.

- Mitigate: Apply compensating controls (WAF rules, network segmentation, input validation) that reduce the risk without fixing the root cause. Mitigations are acceptable as interim measures but should not replace proper fixes.

- Accept: Formally document that the organization accepts the residual risk. Risk acceptance requires documented justification, approval from an appropriate authority (not the development team), compensating controls, and a review date. See Section 7 for the exception process.

- Transfer: Transfer the risk through insurance or contractual mechanisms. Rarely applicable to code-level vulnerabilities.

Remediation must include:

- The fix itself, developed following standard secure coding practices.

- Code review of the fix, with security review for high-severity items.

- Testing to verify the fix resolves the vulnerability.

- Regression testing to verify the fix does not break existing functionality.

Phase 5: Verification

Verification confirms that the remediation actually resolves the vulnerability without introducing new issues.

- Rescan: Run the same scanner that originally discovered the vulnerability to confirm it is no longer reported.

- Manual verification: For high-severity vulnerabilities, have a security team member manually verify the fix.

- Regression testing: Run the application’s test suite to ensure no functionality is broken.

- Penetration retest: For critical vulnerabilities discovered during pen testing, include a retest in the next engagement.

Verification must be performed by someone other than the person who developed the fix. This separation of duties prevents the “I tested my own code and it works” failure mode.

Phase 6: Closure

Closure documents the resolution and updates all tracking systems.

- Update the vulnerability status to “Resolved” or “Closed” in the tracking system.

- Record the remediation method (fix, mitigate, accept).

- Record the verification method and results.

- Record the dates: discovered, triaged, assigned, remediated, verified, closed.

- Calculate SLA compliance: was the vulnerability resolved within the required timeframe?

- Update any related advisories or customer communications.

Phase 7: Lessons Learned

This phase implements CIS 16.3 — root cause analysis and corrective action. It is the most frequently skipped phase and arguably the most valuable.

For every vulnerability (or at minimum, every high and critical vulnerability), ask:

- Why did this vulnerability exist? What was the root cause?

- Why wasn’t it caught earlier? Which controls failed to detect it?

- Is this an isolated instance or part of a pattern? Are there similar vulnerabilities elsewhere?

- What systemic change would prevent recurrence? Not just fixing this instance, but fixing the class of vulnerability.

Detailed root cause analysis techniques are covered in Section 5.

3. External Vulnerability Reporting

CIS 16.2 requires an external reporting mechanism. This means publishing a vulnerability disclosure policy (VDP) that allows anyone — security researchers, customers, partners, or members of the public — to report vulnerabilities they discover.

Published Vulnerability Disclosure Policy (VDP)

Every organization that develops software must have a published VDP. CISA's Secure by Design Pledge (Goal 5) makes this an explicit expectation for software manufacturers.

A VDP must include:

Scope:

- Which products, services, and systems are in scope for vulnerability reports.

- Clearly state what is out of scope (e.g., third-party services, social engineering, physical security).

Secure reporting channel:

- Encrypted email address (e.g., security@company.com with PGP/GPG key published).

- Dedicated web form over HTTPS.

- Bug bounty platform (HackerOne, Bugcrowd) if applicable.

- The channel must support confidential communication.

Expected report content:

- Description of the vulnerability.

- Steps to reproduce.

- Potential impact.

- Any proof-of-concept code or screenshots.

- Reporter’s contact information for follow-up.

Safe harbor provisions:

- Legal protections for good-faith security researchers.

- Statement that the organization will not pursue legal action against researchers who follow the policy.

- Statement that the organization considers good-faith security research to be authorized activity.

- Reference to the DOJ’s 2022 CFAA policy on good-faith security research.

Response timeline commitments:

- Acknowledgment: within 5 business days of report submission.

- Initial triage: within 10 business days.

- Status updates: at least monthly until resolution.

- Target fix timeline: varies by severity (see organizational SLAs).

- Disclosure timeline: typically 90 days from report, following the Google Project Zero standard.

Recognition:

- How researchers will be credited (hall of fame, advisory acknowledgment).

- Whether a bug bounty is offered and general reward ranges.
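The response timeline commitments above translate into concrete due dates for each incoming report. The sketch below assumes a Monday-to-Friday work week and ignores public holidays; the function names and the returned keys are illustrative.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Advance `days` business days (Mon-Fri) from `start`; holidays are ignored."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


def vdp_deadlines(received: date) -> dict[str, date]:
    """Due dates implied by the VDP commitments for a report received on `received`."""
    return {
        "acknowledge_by": add_business_days(received, 5),
        "triage_by": add_business_days(received, 10),
        "disclosure_target": received + timedelta(days=90),  # calendar days
    }


deadlines = vdp_deadlines(date(2026, 3, 2))  # a Monday
for name, due in deadlines.items():
    print(name, due.isoformat())
```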

Named Responsible Party

CIS 16.2 requires a named responsible party for vulnerability report intake. This is typically:

- The Product Security team lead.

- The CISO or a designated security manager.

- A rotating on-call security contact.

The named party is accountable for ensuring reports are acknowledged, triaged, assigned, and tracked through resolution. This person is not necessarily the one who fixes the vulnerability — they ensure the process functions correctly.

4. Severity Rating Systems

CIS 16.6 requires a defined severity rating system. Modern vulnerability management uses multiple scoring systems in combination to achieve risk-based prioritization.

CVSS 4.0 (Common Vulnerability Scoring System)

CVSS 4.0, released in November 2023, introduced significant improvements over CVSS 3.1:

- Base score (0-10): Inherent technical characteristics of the vulnerability. Considers attack vector, attack complexity, attack requirements, privileges required, user interaction, and confidentiality/integrity/availability impact on both the vulnerable system and subsequent systems.

- Threat score: Adjusts the base score based on current threat intelligence. Replaces the CVSS 3.1 “Temporal” metric group. Considers exploit code maturity.

- Environmental score: Adjusts for the organization’s specific environment. Considers modified base metrics and security requirements (confidentiality, integrity, availability importance).

CVSS 4.0 also introduced:

- Supplemental metric group for additional context (safety, automatable, recovery, value density, vulnerability response effort, provider urgency).

- Removal of the “Scope” metric in favor of separate impact assessments.

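- A new Attack Requirements (AT) metric, split out from Attack Complexity, and more granular User Interaction values (None, Passive, Active).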

Limitation: CVSS provides a snapshot of technical severity. It does not predict likelihood of exploitation. A CVSS 9.8 vulnerability in an obscure library that is not reachable from the network in your deployment may be less urgent than a CVSS 7.5 vulnerability in an internet-facing authentication component that is actively exploited.
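The metric structure described above is visible in the CVSS v4.0 vector string. The following sketch only parses a vector into its metrics; it does not compute a score, which requires the lookup tables in the FIRST specification. The helper name `parse_cvss4_vector` is an assumption for illustration.

```python
# The eleven mandatory base metrics in CVSS v4.0 (there is no "S" Scope metric).
CVSS4_BASE_METRICS = ("AV", "AC", "AT", "PR", "UI", "VC", "VI", "VA", "SC", "SI", "SA")


def parse_cvss4_vector(vector: str) -> dict[str, str]:
    """Split a CVSS v4.0 vector string into a metric -> value mapping."""
    prefix, _, rest = vector.partition("/")
    if prefix != "CVSS:4.0":
        raise ValueError(f"not a CVSS v4.0 vector: {vector!r}")
    metrics = dict(item.split(":", 1) for item in rest.split("/"))
    missing = [m for m in CVSS4_BASE_METRICS if m not in metrics]
    if missing:
        raise ValueError(f"missing base metrics: {missing}")
    return metrics


metrics = parse_cvss4_vector(
    "CVSS:4.0/AV:N/AC:L/AT:N/PR:N/UI:N/VC:H/VI:H/VA:H/SC:N/SI:N/SA:N"
)
print(metrics["AV"], metrics["AT"], "S" in metrics)
```

The separate `VC/VI/VA` and `SC/SI/SA` entries are the vulnerable-system and subsequent-system impact metrics that took over the role of Scope.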

EPSS (Exploit Prediction Scoring System)

EPSS addresses the key limitation of CVSS by predicting the probability that a vulnerability will be exploited in the wild within the next 30 days. EPSS uses machine learning trained on historical exploitation data, social media discussions, exploit code availability, and other signals.

- EPSS scores range from 0 to 1 (0% to 100% probability).

- The median EPSS score is very low — most vulnerabilities are never exploited.

- EPSS is updated daily, reflecting changes in the threat landscape.

Key insight: Research consistently shows that only 2-7% of published vulnerabilities are ever exploited in the wild. EPSS helps identify which ones.
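EPSS scores can be retrieved programmatically from the public API that FIRST operates. The sketch below assumes the response is JSON with a `data` list whose rows carry `cve` and `epss` fields; treat that shape as an assumption to verify against the current API documentation. The sample payload uses placeholder CVE IDs and made-up scores.

```python
import json
import urllib.parse
import urllib.request

EPSS_API = "https://api.first.org/data/v1/epss"


def parse_epss(payload: dict) -> dict[str, float]:
    """Map CVE ID -> EPSS probability from an API response body."""
    return {row["cve"]: float(row["epss"]) for row in payload.get("data", [])}


def fetch_epss(cve_ids: list[str]) -> dict[str, float]:
    """Query the EPSS API for a batch of CVE IDs (requires network access)."""
    query = urllib.parse.urlencode({"cve": ",".join(cve_ids)})
    with urllib.request.urlopen(f"{EPSS_API}?{query}", timeout=10) as resp:
        return parse_epss(json.load(resp))


# Illustrative payload with placeholder IDs and invented scores.
sample = {
    "status": "OK",
    "data": [
        {"cve": "CVE-2099-0001", "epss": "0.870000000", "percentile": "0.990000000"},
        {"cve": "CVE-2099-0002", "epss": "0.000430000", "percentile": "0.080000000"},
    ],
}
scores = parse_epss(sample)
urgent = [cve for cve, p in scores.items() if p > 0.1]
print(urgent)
```

Because scores change daily, a tracking system would normally refresh them on a schedule rather than store the value seen at triage time.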

Organizational Severity Classification

Combining CVSS and EPSS with business context, organizations define their own severity levels with associated remediation SLAs:

| Severity | Criteria | Remediation SLA | Example |
|---|---|---|---|
| Critical | CVSS >= 9.0 AND (EPSS > 0.5 OR actively exploited OR CISA KEV) AND internet-facing | 24 hours | RCE in authentication service |
| High | (CVSS >= 7.0 AND (EPSS > 0.1 OR exploitable)) OR any actively exploited | 7 days | SQL injection in API endpoint |
| Medium | CVSS >= 4.0 OR EPSS >= 0.01 OR limited exploitability | 30 days | XSS requiring authenticated access |
| Low | CVSS < 4.0 AND EPSS < 0.01 AND minimal impact | 90 days | Information disclosure in error message |
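The table can be expressed as a small classifier. This sketch assumes the rows are evaluated top to bottom with the first match winning, that a CISA KEV listing counts as active exploitation, and that `exploitable` is an analyst judgment supplied as a flag; the qualitative "limited exploitability" and "minimal impact" criteria are left to human review.

```python
def classify(
    cvss: float,
    epss: float,
    actively_exploited: bool = False,
    in_kev: bool = False,
    internet_facing: bool = False,
    exploitable: bool = False,
) -> str:
    """Return the organizational severity for one finding (first matching row wins)."""
    exploited = actively_exploited or in_kev  # assumption: KEV implies exploitation
    if cvss >= 9.0 and (epss > 0.5 or exploited) and internet_facing:
        return "Critical"
    if (cvss >= 7.0 and (epss > 0.1 or exploitable)) or exploited:
        return "High"
    if cvss >= 4.0 or epss >= 0.01:  # anything the Low row excludes
        return "Medium"
    return "Low"


print(classify(9.8, 0.92, internet_facing=True))  # Critical
print(classify(8.1, 0.30))                        # High
print(classify(6.5, 0.005, in_kev=True))          # High
print(classify(9.8, 0.02))                        # Medium
print(classify(3.1, 0.001))                       # Low
```

The fourth call shows a consequence of the criteria as written: a CVSS 9.8 finding with low exploitation probability and no exposure lands in Medium, which is the intended effect of risk-informed rating but worth confirming with stakeholders.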

Risk-Based vs. Severity-Based Prioritization

Traditional severity-based prioritization (“fix all criticals first, then highs”) is insufficient because:

- It treats all critical vulnerabilities equally regardless of context.

- It ignores exploitability — a critical vulnerability that has never been exploited and has no known exploit code may be less urgent than a high vulnerability with a Metasploit module.

- It ignores business context — a medium vulnerability in a payment processing system may warrant faster remediation than a critical vulnerability in an internal documentation tool.

Risk-based prioritization considers:

- Technical severity (CVSS).

- Exploitation probability (EPSS).

- Asset criticality (business impact if compromised).

- Exposure (internet-facing vs. internal, behind compensating controls).

- Threat intelligence (active exploitation campaigns, CISA KEV listing).
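One way to combine these factors is a single ranking score. The formula below is purely illustrative: the multiplicative form, the 0.5 discount for internal assets, and treating a KEV listing as certainty of exploitation are all assumptions, not an industry standard.

```python
from dataclasses import dataclass


@dataclass
class Vuln:
    name: str
    cvss: float               # technical severity, 0-10
    epss: float               # exploitation probability, 0-1
    asset_criticality: float  # business impact weight, 0-1 (hypothetical scale)
    internet_facing: bool
    in_kev: bool


def risk_score(v: Vuln) -> float:
    """Illustrative 0-100 score: severity x likelihood x criticality x exposure."""
    likelihood = 1.0 if v.in_kev else v.epss
    exposure = 1.0 if v.internet_facing else 0.5
    return round((v.cvss / 10) * likelihood * v.asset_criticality * exposure * 100, 1)


backlog = [
    Vuln("docs-tool-rce", cvss=9.1, epss=0.02, asset_criticality=0.2,
         internet_facing=False, in_kev=False),
    Vuln("payments-xss", cvss=6.5, epss=0.40, asset_criticality=1.0,
         internet_facing=True, in_kev=False),
]
ranked = sorted(backlog, key=risk_score, reverse=True)
print([v.name for v in ranked])
```

Here the medium-severity finding in the payment system outranks the critical one in the internal documentation tool, matching the business-context argument above.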

5. Root Cause Analysis

CIS 16.3 requires root cause analysis that goes beyond fixing individual bugs to identify systemic patterns. The goal is to transform vulnerability findings into SDLC improvements that prevent entire classes of vulnerabilities.

5 Whys Technique

The simplest root cause analysis method. Start with the vulnerability and ask “why” repeatedly until you reach a systemic cause.

Example:

- Why was there a SQL injection in the user search API? — Because user input was concatenated directly into a SQL query.

- Why was user input concatenated instead of parameterized? — Because the developer used string formatting instead of the ORM’s query builder.

- Why did the developer use string formatting? — Because the query was complex and the developer didn’t know how to express it using the ORM.

- Why didn’t the developer know? — Because the team’s ORM training doesn’t cover advanced query patterns.

- Why wasn’t this caught in code review? — Because the reviewer didn’t have a security-focused review checklist that includes parameterized query verification.

Systemic fixes identified:

- Update ORM training to cover advanced query patterns.

- Add parameterized query verification to code review checklist.

- Add a SAST rule to flag string-formatted SQL queries.

- Create an approved query helper library for complex queries.
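The SAST rule in the list above could look like the following sketch, written with Python's standard `ast` module. It flags `.execute()` calls whose first argument is an f-string, a `+` or `%` expression, or a `.format()` call. A production rule in a tool such as Semgrep or CodeQL would be more thorough; the function name and sample code here are illustrative.

```python
import ast


def find_formatted_sql(source: str) -> list[int]:
    """Line numbers of .execute() calls whose query is built by string formatting."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if not (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
        ):
            continue
        query = node.args[0]
        is_fstring = isinstance(query, ast.JoinedStr)
        is_concat_or_percent = isinstance(query, ast.BinOp) and isinstance(
            query.op, (ast.Add, ast.Mod)
        )
        is_format_call = (
            isinstance(query, ast.Call)
            and isinstance(query.func, ast.Attribute)
            and query.func.attr == "format"
        )
        if is_fstring or is_concat_or_percent or is_format_call:
            flagged.append(node.lineno)
    return sorted(flagged)


SAMPLE = '''
cur.execute("SELECT * FROM users WHERE name = '%s'" % name)
cur.execute(f"SELECT * FROM users WHERE id = {user_id}")
cur.execute("SELECT * FROM users WHERE id = ?", (user_id,))
cur.execute("SELECT * FROM users WHERE name = " + name)
'''
print(find_formatted_sql(SAMPLE))  # [2, 3, 5]
```

Line 4 of the sample, the parameterized query, is the only call left unflagged. The check is syntactic, so it will miss queries assembled in a variable before the call.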

Fault Tree Analysis

For complex vulnerabilities involving multiple contributing factors, fault tree analysis maps the logical relationships between events that led to the vulnerability.

Start with the undesired event (vulnerability in production) at the top, then decompose into contributing causes using AND/OR gates:

- OR gate: Any one of the child events is sufficient to cause the parent event.

- AND gate: All child events must occur together to cause the parent event.

This technique reveals which controls, if strengthened, would have the greatest impact on preventing similar vulnerabilities.
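A fault tree can be evaluated mechanically once it is written down. The sketch below assumes a tree of AND/OR gates whose leaves are named events; the `Gate` class and the event names, which reuse the SQL injection example from the 5 Whys section, are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class Gate:
    kind: str                                     # "AND" or "OR"
    children: list = field(default_factory=list)  # Gate objects or event names


def occurs(node, events: set[str]) -> bool:
    """True if the (sub)tree's top event occurs given the set of basic events."""
    if isinstance(node, str):
        return node in events
    results = [occurs(child, events) for child in node.children]
    return all(results) if node.kind == "AND" else any(results)


# Top event: SQL injection reaches production.
tree = Gate("AND", [
    Gate("OR", ["string-formatted query", "unsafe raw ORM call"]),  # flaw introduced
    "code review missed it",
    "no SAST rule for SQL formatting",
])

observed = {"string-formatted query", "code review missed it",
            "no SAST rule for SQL formatting"}
print(occurs(tree, observed))                                        # True
print(occurs(tree, observed - {"no SAST rule for SQL formatting"}))  # False
```

Removing a single event under the AND gate prevents the top event, which is how the tree points to the controls with the greatest preventive effect.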

CWE-Based Categorization

Categorize every vulnerability by its CWE (Common Weakness Enumeration) type. Over time, this reveals patterns:

- If 40% of your vulnerabilities are CWE-79 (Cross-site Scripting), invest in output encoding libraries and training.

- If 25% are CWE-502 (Deserialization of Untrusted Data), invest in safe deserialization patterns and architectural changes.

- If dependency vulnerabilities dominate, invest in SCA tooling and dependency management processes.

Track CWE distribution over time. A successful vulnerability management program shows decreasing density of previously common CWE types as systemic fixes take effect.
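Computing the distribution is straightforward once each finding carries a CWE ID. The data below is invented for illustration; comparing the output across quarters gives the trend described above.

```python
from collections import Counter


def cwe_distribution(cwes: list[str]) -> dict[str, float]:
    """Percentage share of each CWE type, most common first."""
    counts = Counter(cwes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cwe: round(100 * n / total, 1) for cwe, n in counts.most_common()}


# Invented quarter of findings, one CWE ID per closed vulnerability.
quarter = ["CWE-79"] * 8 + ["CWE-89"] * 5 + ["CWE-502"] * 5 + ["CWE-22"] * 2
print(cwe_distribution(quarter))
```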

Pattern Identification Across Vulnerabilities

Beyond individual CWE types, look for patterns across vulnerabilities:

- Team patterns: Does one team produce more vulnerabilities than others? This may indicate a training gap or tooling gap, not individual failure.

- Technology patterns: Do vulnerabilities cluster in a specific technology stack or framework version?

- Timing patterns: Do vulnerabilities increase after major refactors, technology migrations, or staffing changes?

- Phase patterns: Are most vulnerabilities discovered in production (indicating testing gaps) or in development (indicating healthy shift-left)?

Closing the Loop: Feedback into the SDLC

Root cause analysis only has value if it drives change. Each root cause analysis must produce at least one of:

- Training update: New content addressing the identified gap.

- Tooling change: New scanner rule, linter rule, or IDE plugin configuration.

- Process change: Updated code review checklist, new architectural standard, modified threat model.

- Library/framework change: New safe-by-default library, updated framework version, new helper function.

Track these corrective actions to completion. An unimplemented corrective action is worse than no analysis — it represents identified risk that was knowingly ignored.

6. Vulnerability Tracking

Centralized Tracking System

All vulnerabilities must be tracked in a single centralized system. This system must:

- Accept input from all discovery sources: Scanner integrations (SAST, DAST, SCA, container), manual findings (pen tests, code reviews), external reports (VDP, bug bounty).

- Deduplicate findings: The same vulnerability reported by multiple scanners should be tracked as one item.

- Track lifecycle state: New, Triaged, Assigned, In Remediation, Verification, Closed, Risk Accepted.

- Enforce SLA tracking: Automatically calculate time-in-state and SLA compliance.

- Support workflow automation: Auto-assignment based on component ownership, auto-escalation on SLA breach.
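The lifecycle states listed above can be enforced as a small state machine. The state names come from the list; the allowed transitions are an assumption about a reasonable workflow (for example, a failed verification returning to remediation) and should be adapted to local process.

```python
# Allowed next states for each lifecycle state (transitions are assumptions).
ALLOWED_TRANSITIONS = {
    "New": {"Triaged"},
    "Triaged": {"Assigned", "Closed"},              # Closed covers false positives
    "Assigned": {"In Remediation", "Risk Accepted"},
    "In Remediation": {"Verification", "Risk Accepted"},
    "Verification": {"Closed", "In Remediation"},   # failed verification loops back
    "Risk Accepted": {"Assigned"},                  # exception expired
    "Closed": set(),
}


def transition(current: str, target: str) -> str:
    """Return the new state, or raise if the move skips a lifecycle phase."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"illegal transition: {current} -> {target}")
    return target


state = "New"
for step in ("Triaged", "Assigned", "In Remediation", "Verification", "Closed"):
    state = transition(state, step)
print(state)
```

Rejecting moves such as New to Closed keeps findings from bypassing triage and verification.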

Common platforms:

- DefectDojo: Open-source vulnerability management platform. Excellent scanner integration (150+ parsers), deduplication, and reporting.

- ThreadFix: Commercial vulnerability correlation and management platform.

- Jira with security workflows: Common but requires careful configuration to track security-specific metadata.

- Nucleus Security: Cloud-native vulnerability management with risk-based prioritization.

- Vulcan Cyber (acquired by Tenable in 2025): Risk-based vulnerability management with automated remediation.

Metrics and Dashboards

Track and report on these key metrics:

Volume metrics:

- Total open vulnerabilities by severity.

- New vulnerabilities discovered per period (week/month).

- Vulnerabilities closed per period.

- Backlog trend (is it growing or shrinking?).

Time metrics:

- Mean Time to Remediate (MTTR) by severity.

- Mean Time to Detect (MTTD) — time from introduction to discovery.

- Age distribution — how old are open vulnerabilities?

- SLA compliance rate by severity.

Quality metrics:

- False positive rate by scanner and vulnerability type.

- Recurrence rate — same vulnerability type reappearing after fix.

- Escape rate — vulnerabilities reaching production that should have been caught earlier.

Executive dashboard elements:

- Risk posture trend (overall vulnerability risk score over time).

- SLA compliance summary (percentage meeting SLA by severity).

- Top 10 riskiest applications.

- Remediation velocity (vulns closed per sprint/release).

- Comparison to industry benchmarks.
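The time metrics above reduce to simple arithmetic over closed records. This sketch measures from assignment to closure, consistent with the SLA clock defined in Phase 3, and uses the remediation windows from Section 4; the records themselves are invented.

```python
from datetime import date
from statistics import mean

SLA_DAYS = {"critical": 1, "high": 7, "medium": 30, "low": 90}

# (severity, assigned, closed): invented records for illustration.
closed = [
    ("high", date(2026, 1, 5), date(2026, 1, 9)),     # 4 days, within SLA
    ("high", date(2026, 1, 10), date(2026, 1, 20)),   # 10 days, SLA breach
    ("medium", date(2026, 1, 3), date(2026, 1, 23)),  # 20 days, within SLA
]


def mttr_days(records, severity):
    """Mean days from assignment to closure for one severity, or None if no data."""
    durations = [(c - a).days for s, a, c in records if s == severity]
    return mean(durations) if durations else None


def sla_compliance(records, severity):
    """Percentage of closed items resolved within the SLA window, or None if no data."""
    durations = [(c - a).days for s, a, c in records if s == severity]
    if not durations:
        return None
    met = sum(1 for d in durations if d <= SLA_DAYS[severity])
    return round(100 * met / len(durations), 1)


print(mttr_days(closed, "high"), sla_compliance(closed, "high"))
```

The example also shows why both numbers belong on the dashboard: a high-severity MTTR of 7 days looks compliant on its own, while the compliance rate reveals that half of the items breached.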

7. Vulnerability Exception Process

Not every vulnerability can or should be fixed immediately. A formal exception process provides a controlled mechanism for accepting risk when remediation is not feasible.

Exception Request Requirements

- Justification: Why can’t this vulnerability be remediated within the SLA? (Technical infeasibility, business constraint, insufficient resources.)

- Business impact analysis: What is the risk of leaving this vulnerability unresolved?

- Compensating controls: What alternative controls reduce the risk? (WAF rules, network segmentation, monitoring, access restrictions.)

- Requested duration: How long is the exception needed? Maximum 90 days for Critical/High, 180 days for Medium/Low.

- Remediation plan: What will be done to eventually resolve the vulnerability, and when?

Approval Authority

| Severity | Approver | Review Cadence |
|---|---|---|
| Critical | CISO or VP Engineering | Every 30 days |
| High | Security Director or Engineering Director | Every 60 days |
| Medium | Security Manager or Engineering Manager | Every 90 days |
| Low | Security Lead or Team Lead | Every 180 days |

Exception Lifecycle

- Request: Developer or team lead submits exception with required documentation.

- Review: Security team evaluates compensating controls and residual risk.

- Approval/Denial: Appropriate authority approves or denies with documented rationale.

- Monitoring: Compensating controls are monitored for effectiveness.

- Renewal or closure: At expiration, the exception must be renewed (with updated justification) or the vulnerability must be remediated.

Exceptions must never become permanent. An exception that has been renewed more than twice should trigger an escalation review.
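The time limits in this section can be checked automatically. The sketch below applies the maximum durations (90 days for Critical/High, 180 days for Medium/Low) and the escalation rule for exceptions renewed more than twice; the `RiskException` record and the status strings are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MAX_DURATION_DAYS = {"critical": 90, "high": 90, "medium": 180, "low": 180}


@dataclass
class RiskException:
    severity: str
    approved_on: date
    duration_days: int
    renewals: int = 0


def check_exception(exc: RiskException, today: date) -> str:
    """Return 'invalid', 'escalate', 'expired', or 'active' for one exception."""
    if exc.duration_days > MAX_DURATION_DAYS[exc.severity]:
        return "invalid"   # requested duration exceeds the policy maximum
    if exc.renewals > 2:
        return "escalate"  # renewed more than twice
    if today > exc.approved_on + timedelta(days=exc.duration_days):
        return "expired"   # must be renewed or remediated
    return "active"


exc = RiskException("high", date(2026, 1, 1), 90)
print(check_exception(exc, date(2026, 2, 1)))   # active
print(check_exception(exc, date(2026, 4, 15)))  # expired
```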

8. AI-Powered Vulnerability Management

The scale of modern vulnerability management demands AI assistance. Manual triage of 59,000+ CVEs per year, combined with organization-specific findings from SAST, DAST, SCA, and pen testing, is not feasible without automation.

AI-Powered Triage

Microsoft Vuln.AI: Microsoft’s AI-powered vulnerability triage system, deployed internally, reduced triage time by more than 50%. The system analyzes vulnerability reports, correlates them with threat intelligence, assesses exploitability, and recommends severity classifications — allowing human analysts to focus on edge cases and high-severity items.

CrowdStrike ExPRT.AI: CrowdStrike's ExPRT.AI (Expert Prediction Rating) uses machine learning to predict which vulnerabilities are most likely to be exploited. Key finding: 95% of remediation effort can be focused on just 5% of the most exploitable vulnerabilities. Organizations using ExPRT.AI report dramatic reduction in remediation workload while maintaining or improving security posture.

Tenable VPR (Vulnerability Priority Rating): Tenable’s research found that only 1.6% of vulnerabilities represent actual business risk in any given environment. Their Vulnerability Priority Rating uses machine learning to identify this critical minority, combining technical severity with exploitation intelligence and environmental context.

EPSS Adoption Impact

Organizations that adopt EPSS-based prioritization consistently report:

- 60-80% reduction in effective remediation workload: By focusing on the 2-7% of vulnerabilities with meaningful exploitation probability, teams can dramatically reduce the volume of vulnerabilities requiring immediate attention.

- 3x less likely to suffer a breach: Analyst forecasts (notably Gartner's projection for continuous threat exposure management programs) suggest organizations using exposure-based prioritization (EPSS + asset criticality + threat intelligence) are approximately three times less likely to experience a security breach compared to those using CVSS-only prioritization.

- Improved developer satisfaction: Developers are more willing to address vulnerabilities when the prioritization is clearly risk-informed rather than “fix everything the scanner found.”

Agentic AI for Vulnerability Management

The newest frontier is fully agentic AI vulnerability management, where AI systems autonomously execute vulnerability management tasks with human oversight.

Maze (Agentic AI): Maze represents the emerging category of agentic vulnerability management platforms. These systems can:

- Autonomously discover vulnerabilities across the attack surface.

- Correlate findings with threat intelligence in real time.

- Generate remediation recommendations with specific code changes.

- Validate fixes by re-scanning after remediation.

- Escalate to humans only when confidence is low or risk is high.

AI-Automated Remediation

AI can autonomously remediate certain categories of vulnerabilities with minimal human oversight:

Autonomous remediation (low-risk):

- Outdated library updates where the new version is a patch release with no breaking changes.

- Known-safe dependency updates backed by compatibility testing.

- Configuration fixes for well-understood misconfigurations (e.g., missing security headers, permissive CORS).

- Secret rotation for expired or compromised credentials.

Human-supervised remediation (medium-risk):

- Major version dependency updates that may include breaking changes.

- Code-level fixes generated by AI that require human review for correctness and context.

- Infrastructure changes that could affect availability.

Human-required remediation (high-risk):

- Architectural changes.

- Business logic vulnerabilities.

- Vulnerabilities requiring design decisions.

- Fixes in safety-critical or regulated systems.

The key principle: AI reduces the human workload; it does not eliminate human judgment. The goal is to have humans spend their time on the 5-10% of vulnerabilities that genuinely require human expertise, not the 90-95% that are mechanical and repetitive.
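The split between autonomous and supervised dependency updates can be sketched as a routing rule. This example assumes plain `MAJOR.MINOR.PATCH` version strings with no pre-release suffixes and chooses to send minor-version bumps to human review; both are assumptions for illustration, as are the function names and return labels.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string (no pre-release tags)."""
    major, minor, patch = (int(part) for part in version.split(".")[:3])
    return major, minor, patch


def route_update(current: str, target: str, tests_passed: bool) -> str:
    """Decide whether a dependency bump may be applied without human review."""
    cur, new = parse_version(current), parse_version(target)
    if new[0] != cur[0]:
        return "human-supervised"  # major bump may include breaking changes
    if new[:2] == cur[:2] and new > cur and tests_passed:
        return "autonomous"        # patch release backed by compatibility tests
    return "human-supervised"      # minor bumps and untested patches (assumption)


print(route_update("2.4.1", "2.4.3", tests_passed=True))   # autonomous
print(route_update("2.4.1", "3.0.0", tests_passed=True))   # human-supervised
print(route_update("2.4.1", "2.4.3", tests_passed=False))  # human-supervised
```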

9. Integration Points

Integration with Incident Response (Module 7.3)

When a vulnerability is actively exploited, it transitions from the vulnerability management process to the incident response process. Key integration points:

- Vulnerability tracking system triggers incident response when exploitation is detected.

- Incident response team has read access to vulnerability details and remediation history.

- Post-incident findings feed back into the vulnerability management process.

- MTTR for incident-driven remediation is tracked separately from routine remediation.

Integration with Coordinated Disclosure (Module 7.2)

When vulnerabilities are reported externally, the disclosure process and the vulnerability management process must be coordinated:

- External reports enter the vulnerability management lifecycle at the Triage phase.

- Disclosure timelines (typically 90 days) serve as hard deadlines for remediation.

- Communication with reporters is tracked alongside technical remediation.

- CVE assignment and advisory publication are coordinated with fix deployment.

Integration with CI/CD Pipeline

The vulnerability management program must be integrated with the CI/CD pipeline:

- Scanner findings automatically create entries in the vulnerability tracking system.

- Build policies enforce quality gates based on vulnerability severity.

- Remediation PRs link to vulnerability tracking entries.

- Deployment gates check vulnerability status before promoting to production.
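A deployment gate of the kind described above can be a short check against the tracking system's open findings. The record shape (`id`, `severity`, optional `exception_until`) and the choice to block on critical and high severities are assumptions; a real gate would query the tracking platform's API.

```python
from datetime import date

BLOCKING_SEVERITIES = {"critical", "high"}  # assumed gate policy


def deployment_allowed(open_vulns: list[dict], today: date) -> tuple[bool, list[str]]:
    """Return (allowed, blocking IDs) for a release candidate's open findings."""
    blockers = []
    for vuln in open_vulns:
        exception_until = vuln.get("exception_until")
        if exception_until is not None and exception_until >= today:
            continue  # covered by an approved, unexpired risk exception
        if vuln["severity"] in BLOCKING_SEVERITIES:
            blockers.append(vuln["id"])
    return (not blockers, blockers)


open_vulns = [
    {"id": "VULN-101", "severity": "high"},
    {"id": "VULN-102", "severity": "critical", "exception_until": date(2026, 6, 30)},
    {"id": "VULN-103", "severity": "medium"},
]
allowed, blockers = deployment_allowed(open_vulns, date(2026, 3, 2))
print(allowed, blockers)
```

Honoring unexpired exceptions ties the gate to the exception process in Section 7, so an approved risk acceptance does not block every release during its validity window.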

10. Annual Review

CIS 16.2 and 16.6 both require annual review of vulnerability management processes and severity rating systems.

Annual Review Checklist

- Review and update the vulnerability disclosure policy (VDP).

- Review and update severity rating criteria and SLA timelines.

- Analyze the year’s vulnerability metrics: volume trends, MTTR trends, SLA compliance, escape rates.

- Review root cause analysis findings and corrective action completion.

- Assess scanner effectiveness: false positive rates, detection coverage, new tool evaluation.

- Review exception process: volume, approval patterns, exception duration trends.

- Benchmark against industry data (BSIMM, Verizon DBIR, OWASP statistics).

- Update tooling and automation based on lessons learned.

- Verify integration with incident response is functioning.

- Brief executive leadership on program status and resource needs.

Key Takeaways

- Vulnerability management is a lifecycle, not a one-time scan. The seven phases — discovery, triage, assignment, remediation, verification, closure, lessons learned — must all function for the program to be effective.

- External reporting is not optional. CIS 16.2, CISA Secure by Design, and industry best practice require a published vulnerability disclosure policy with safe harbor provisions and defined SLAs.

- Risk-based prioritization outperforms severity-only prioritization. Combining CVSS with EPSS and business context lets teams concentrate on the roughly 2-7% of vulnerabilities that are ever exploited, instead of treating every high CVSS score as equally urgent.

- Root cause analysis is where vulnerability management creates lasting value. Fixing individual bugs is necessary; preventing classes of bugs is transformational.

- AI is essential for managing modern vulnerability volumes. With 59,000+ CVEs projected for 2026, organizations that do not leverage AI-powered triage and prioritization will fall further behind.

- The exception process must be formal and time-bound. Risk acceptance without documentation, approval, compensating controls, and expiration is just ignoring the problem.

Practical Exercise

Scenario: Your organization receives the following vulnerability report from a security researcher via your VDP:

“I discovered a Server-Side Request Forgery (SSRF) vulnerability in your API gateway’s webhook configuration endpoint. By manipulating the callback URL parameter, I can make the server send HTTP requests to internal services, including your metadata endpoint at 169.254.169.254. I have confirmed I can retrieve cloud provider IAM credentials. Steps to reproduce are attached.”

Tasks:

- Walk through all seven phases of the vulnerability management lifecycle for this finding.

- Assign a severity level using CVSS 4.0, EPSS, and organizational context (the API gateway is internet-facing, processes financial data, and runs on AWS).

- Draft the acknowledgment response to the researcher.

- Conduct a 5 Whys root cause analysis and identify systemic fixes.

- Determine what compensating controls could be applied while the fix is being developed.

References

- CIS Controls v8, Safeguard 16.2: Software Vulnerability Reporting and Response

- CIS Controls v8, Safeguard 16.3: Root Cause and Corrective Action

- CIS Controls v8, Safeguard 16.6: Severity Rating and Prioritization

- FIRST CVSS v4.0 Specification: https://www.first.org/cvss/v4-0/

- FIRST EPSS: https://www.first.org/epss/

- NIST SSDF RV.1: Identify and Confirm Vulnerabilities on an Ongoing Basis

- CISA Secure by Design Goals 5-6

- OWASP Vulnerability Management Guide

- MITRE CWE: https://cwe.mitre.org/

Study Guide

Key Takeaways

- Vulnerability management is a lifecycle, not a scan — Seven phases: Discovery, Triage, Assignment, Remediation, Verification, Closure, Lessons Learned.

- 59,000 CVEs projected for 2026: up from roughly 47,000 in 2025, which was itself a 17% YoY increase; manual triage is no longer feasible without AI assistance.

- Only 2-7% of CVEs are ever exploited — EPSS identifies which ones; organizations using EPSS report 60-80% reduction in remediation workload.

- Root cause analysis creates lasting value — CIS 16.3 requires CWE categorization and feeding lessons back into the SDLC.

- Risk-based beats severity-based prioritization — CVSS 6.5 with EPSS 0.94 is more urgent than CVSS 9.8 with EPSS 0.003.

- AI is essential at modern vulnerability volumes — CrowdStrike ExPRT.AI: 95% of effort focused on 5% most exploitable vulnerabilities.

- Exception process must be formal and time-bound — Max 90 days for Critical/High; documented compensating controls; CISO approval for critical.
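To make the risk-based comparison in the takeaways above concrete, the sketch below ranks the two example findings (CVSS 6.5 with EPSS 0.94 versus CVSS 9.8 with EPSS 0.003). The scoring function is an illustrative assumption, not a standard formula: it multiplies EPSS (likelihood) by normalized CVSS (impact) and a hypothetical business-context weight, and pins anything listed in CISA KEV to the top.

```python
from dataclasses import dataclass


@dataclass
class Vuln:
    cve_id: str                # placeholder identifiers, not real CVEs
    cvss: float                # CVSS base score, 0.0-10.0
    epss: float                # EPSS probability, 0.0-1.0
    in_kev: bool = False       # listed in CISA KEV
    asset_weight: float = 1.0  # hypothetical business-context multiplier


def risk_score(v: Vuln) -> float:
    """Illustrative score: likelihood x impact x business context."""
    if v.in_kev:
        return float("inf")  # known exploitation outranks any prediction
    return v.epss * (v.cvss / 10.0) * v.asset_weight


backlog = [
    Vuln("CVE-A", cvss=9.8, epss=0.003),
    Vuln("CVE-B", cvss=6.5, epss=0.94),
]
ranked = sorted(backlog, key=risk_score, reverse=True)
print([v.cve_id for v in ranked])  # ['CVE-B', 'CVE-A']
```

A CVSS-only sort would put CVE-A first; the combined score reverses that order because CVE-B is far more likely to be exploited.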

Important Definitions

| Term | Definition |
|---|---|
| EPSS | Exploit Prediction Scoring System: ML probability of exploitation within 30 days (0-1) |
| CVSS 4.0 | Common Vulnerability Scoring System v4 with base, threat, and environmental scores |
| CISA KEV | Known Exploited Vulnerabilities catalog requiring urgent remediation |
| CWE | Common Weakness Enumeration: standardized classification of vulnerability types |
| MTTR | Mean Time to Remediate: average time from finding to fix, by severity |
| VDP | Vulnerability Disclosure Policy: public document for external vulnerability reporting |
| 5 Whys | Root cause technique asking “why” repeatedly until reaching a systemic cause |
| Fault Tree Analysis | Maps logical AND/OR relationships between events leading to a vulnerability |
| DefectDojo | Open-source vulnerability management platform with 150+ scanner integrations |
| ExPRT.AI | CrowdStrike’s ML system predicting which 5% of vulnerabilities pose 95% of risk |

Quick Reference

- Remediation SLAs: Critical 24 hours, High 7 days, Medium 30 days, Low 90 days

- Critical Severity Criteria: CVSS >=9.0 AND (EPSS >0.5 OR actively exploited OR CISA KEV) AND internet-facing

- Exception Duration: Critical/High max 90 days, Medium/Low max 180 days; renewed >2x triggers escalation

- CVE Growth: 40K (2024) -> 47K (2025) -> 59K (2026 projected) -> 193K (2028 projected)

- Common Pitfalls: CVSS-only prioritization, skipping lessons learned phase, permanent exceptions without review, manual triage at scale, no CWE tracking
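The Critical severity rule, remediation SLAs, and exception limits above translate directly into code. The sketch below is one possible encoding with hypothetical function names. It implements only the Critical rule stated here, and it assumes the SLA clock starts at triage, which the Quick Reference does not specify.

```python
from datetime import datetime, timedelta

# Remediation SLAs and maximum exception durations from the Quick Reference.
REMEDIATION_SLA = {
    "critical": timedelta(hours=24),
    "high": timedelta(days=7),
    "medium": timedelta(days=30),
    "low": timedelta(days=90),
}
MAX_EXCEPTION_DAYS = {"critical": 90, "high": 90, "medium": 180, "low": 180}


def is_critical(cvss: float, epss: float, actively_exploited: bool,
                in_kev: bool, internet_facing: bool) -> bool:
    """CVSS >= 9.0 AND (EPSS > 0.5 OR exploited OR KEV) AND internet-facing."""
    threat_signal = epss > 0.5 or actively_exploited or in_kev
    return cvss >= 9.0 and threat_signal and internet_facing


def remediation_due(severity: str, triaged_at: datetime) -> datetime:
    """Return the SLA deadline, measured from triage (an assumption)."""
    return triaged_at + REMEDIATION_SLA[severity]


def exception_expiry(severity: str, approved_at: datetime) -> datetime:
    """Return the latest allowed expiration for a risk exception."""
    return approved_at + timedelta(days=MAX_EXCEPTION_DAYS[severity])


def exception_needs_escalation(renewal_count: int) -> bool:
    """Exceptions renewed more than twice trigger escalation."""
    return renewal_count > 2


triaged = datetime(2026, 1, 15, 9, 0)
print(is_critical(9.8, 0.72, False, False, True))   # True
print(is_critical(9.8, 0.003, False, False, True))  # False: no threat signal
print(remediation_due("critical", triaged))         # 2026-01-16 09:00:00
```

Keeping the thresholds in named tables rather than scattered through the logic makes the annual review of SLA and severity criteria a one-place change.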

Review Questions

- Walk through all seven lifecycle phases for an SSRF vulnerability reported via VDP that retrieves cloud IAM credentials.

- Explain why CVSS-only prioritization fails and design a multi-factor prioritization model using CVSS, EPSS, and business context.

- Conduct a 5 Whys analysis on a SQL injection in production and identify systemic fixes that prevent the entire class of vulnerability.

- At 59,000+ CVEs projected for 2026, what AI-powered tools and approaches would you deploy for triage and prioritization?

- Design a vulnerability exception process including required documentation, approval authority, duration limits, and renewal criteria.