6.2 — Artifact Integrity & SBOM

Introduction

Software does not exist in isolation. Modern applications are assemblies — composed of first-party code, open-source libraries, commercial SDKs, container base images, configuration templates, and increasingly, AI models and datasets. The integrity of the final product depends on the integrity of every component and the processes that assemble them. A single compromised dependency, a tampered build artifact, or a modified container image can undermine every other security control in the organization.

CIS Control 16.4 requires organizations to establish and manage an inventory of third-party software components. You cannot secure what you do not know about. CIS Control 16.5 requires that those components be up-to-date and trusted: acquired from trusted sources or evaluated for vulnerabilities before use. Together, these controls form the foundation of software supply chain security.

This module covers artifact signing and verification, immutable builds, the SLSA framework for supply chain integrity, the Software Bill of Materials (SBOM) as the inventory mechanism, and the emerging requirement for AI BOMs that extend traditional SBOMs to cover AI components.

CIS Control 16.4: Third-Party Component Inventory

CIS 16.4 establishes the requirement:

- Maintain a complete inventory: Every third-party component (library, framework, SDK, container image, API client) in every application must be cataloged.

- Risk assessment per component: Each component carries risk, shaped by the severity of its known vulnerabilities, the exposure of the consuming application, the maintenance status of the project, and the security track record of its maintainers.

- Monthly evaluation: The inventory is not a one-time exercise. Components must be evaluated monthly for new vulnerabilities, end-of-life status, license changes, and maintainer changes.

- Support status tracking: Is the component actively maintained? When was the last release? Are security patches applied promptly? Components approaching or past end-of-life require migration plans.

The SBOM is the mechanism that operationalizes this control. Without automated SBOM generation and continuous monitoring, maintaining an accurate inventory across dozens or hundreds of applications is functionally impossible.

CIS Control 16.5: Up-to-Date and Trusted Components

CIS 16.5 complements the inventory requirement:

- Up-to-date: Components must be current. Not necessarily the bleeding edge, but within a supported version range that receives security patches.

- Trusted sources: Components must come from established, reputable sources — official package registries, verified publishers, signed releases.

- Prefer established frameworks: When choosing between competing libraries, prefer those with larger communities, longer track records, more active maintenance, and established security practices.

- Pre-use vulnerability evaluation: Before adding any new dependency, check it against vulnerability databases (NVD, OSV, GitHub Advisory Database). Do not introduce known-vulnerable components.
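As a minimal sketch of the pre-use check, assuming Python 3.9+ and the public OSV API (`POST https://api.osv.dev/v1/query`), the helpers below build an OSV query for one package version and turn the response into an approve/reject decision. The function names are hypothetical; only the request and response shapes come from OSV.

```python
import json
import urllib.request

OSV_QUERY_URL = "https://api.osv.dev/v1/query"


def build_osv_query(name: str, ecosystem: str, version: str) -> dict:
    """JSON body for OSV's single-package query (ecosystem e.g. 'PyPI', 'npm', 'Maven')."""
    return {"package": {"name": name, "ecosystem": ecosystem}, "version": version}


def query_osv(name: str, ecosystem: str, version: str, timeout: float = 10.0) -> dict:
    """POST the query to OSV and return the decoded JSON response (needs network access)."""
    body = json.dumps(build_osv_query(name, ecosystem, version)).encode("utf-8")
    request = urllib.request.Request(
        OSV_QUERY_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def known_vulnerability_ids(osv_response: dict) -> list:
    """OSV answers {} when nothing matches, otherwise {"vulns": [{"id": ...}, ...]}."""
    return sorted(vuln["id"] for vuln in osv_response.get("vulns", []))


def approve_new_dependency(osv_response: dict) -> bool:
    """Pre-use gate: reject a version that has any known advisory."""
    return not known_vulnerability_ids(osv_response)
```

For example, `approve_new_dependency(query_osv("org.apache.logging.log4j:log4j-core", "Maven", "2.14.1"))` is expected to return `False`, because that version is affected by Log4Shell. A production gate would also follow OSV's `next_page_token` when results are paginated.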

Artifact Signing and Verification

Artifact signing cryptographically binds an artifact to its creator, ensuring that what you deploy is exactly what was built, by whom you expect, through the process you trust.

Container Image Signing

Cosign (Sigstore): Cosign is the standard tool for signing container images in the Sigstore ecosystem. It supports both key-based and keyless signing:

```shell
# Keyless signing (uses the CI job's OIDC identity; no key management needed)
cosign sign --yes ghcr.io/myorg/myapp@sha256:abc123...

# Verification: for GitHub Actions, the certificate identity is the workflow URI, not an email
cosign verify \
  --certificate-identity=https://github.com/myorg/myapp/.github/workflows/release.yml@refs/heads/main \
  --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
  ghcr.io/myorg/myapp@sha256:abc123...
```

Keyless signing ties the signature to a verifiable identity (CI platform OIDC token, developer identity) through Fulcio (certificate authority) and records the signing event in Rekor (transparency log). There are no long-lived signing keys to manage, rotate, or protect.

Docker Content Trust (Notary):

Docker’s built-in signing mechanism using The Update Framework (TUF). Enabled by setting DOCKER_CONTENT_TRUST=1. More complex key management than Cosign but deeply integrated into Docker workflows.

Binary and Package Signing

GPG signing: Traditional approach for binaries and releases. Requires key management infrastructure.

Sigstore keyless signing: Extends the same keyless model to arbitrary artifacts:

```shell
# Sign any file keylessly
cosign sign-blob --yes --output-signature sig.pem --output-certificate cert.pem myartifact.tar.gz

# Verify: identity and issuer must match the CI workflow that signed the file
cosign verify-blob --signature sig.pem --certificate cert.pem \
  --certificate-identity=https://github.com/myorg/myapp/.github/workflows/release.yml@refs/heads/main \
  --certificate-oidc-issuer=https://token.actions.githubusercontent.com \
  myartifact.tar.gz
```

Package ecosystem signing:

- npm provenance: npm packages can include SLSA provenance attestations proving they were built from a specific commit by a specific CI system.

- PyPI Trusted Publishers: PyPI supports OIDC-based publishing in which a configured CI workflow exchanges its identity token for a short-lived upload token, so no long-lived API token has to be stored in CI.

- Maven Central signing: All artifacts on Maven Central must be GPG-signed.

Deployment Verification

Signing is meaningless without verification at deployment time:

- Admission controllers: Kubernetes admission controllers (Kyverno, Sigstore Policy Controller, or OPA/Gatekeeper with an external data provider) can reject any container image that is not signed by a trusted identity.

- Binary verification: Deployment scripts verify GPG or Cosign signatures before executing downloaded binaries.

- Reject unsigned artifacts: The default posture is denial. Unsigned or invalidly signed artifacts do not deploy.

```yaml
# Kyverno policy: require Cosign signature
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: verify-image-signatures
spec:
  validationFailureAction: Enforce
  rules:
    - name: verify-cosign-signature
      match:
        any:
          - resources:
              kinds:
                - Pod
      verifyImages:
        - imageReferences:
            - "ghcr.io/myorg/*"
          attestors:
            - entries:
                - keyless:
                    subject: "https://github.com/myorg/*"
                    issuer: "https://token.actions.githubusercontent.com"
```

Immutable Builds

Immutable builds ensure that what is tested is exactly what is deployed. No modifications between build and deployment.

Principles

Build once, promote everywhere: An artifact is built exactly once from a specific commit. That same artifact (same bytes, same hash) is promoted through dev, staging, UAT, and production. It is never rebuilt for each environment.

Content-addressable: Artifacts are identified by their cryptographic hash (SHA-256), not by mutable labels. The hash is the identity. If a single byte changes, the hash changes, and it is a different artifact.
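To make content addressing concrete, here is a small Python sketch (standard library only). It derives an OCI-style `sha256:<hex>` identifier for a blob, shows that a one-byte change produces a different identity, and checks whether an image reference is pinned by digest rather than by a mutable tag. The helper names and sample bytes are hypothetical.

```python
import hashlib
import re

# A digest-pinned image reference ends in "@sha256:" plus 64 lowercase hex characters.
DIGEST_PINNED = re.compile(r"@sha256:[0-9a-f]{64}$")


def artifact_digest(data: bytes) -> str:
    """Content identifier for a blob, in the form used by OCI registries."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def matches_pinned_digest(data: bytes, pinned: str) -> bool:
    """True only if the bytes we hold are exactly the bytes that were recorded."""
    return artifact_digest(data) == pinned


def is_digest_pinned(image_ref: str) -> bool:
    """Reject references such as 'ghcr.io/myorg/myapp:latest' that use mutable tags."""
    return DIGEST_PINNED.search(image_ref) is not None


release = b"#!/bin/sh\necho 'release 1.4.2'\n"
patched = b"#!/bin/sh\necho 'release 1.4.3'\n"  # differs from `release` in one byte

recorded = artifact_digest(release)
print(matches_pinned_digest(release, recorded))  # True
print(matches_pinned_digest(patched, recorded))  # False
print(is_digest_pinned("ghcr.io/myorg/myapp:latest"))  # False
print(is_digest_pinned("ghcr.io/myorg/myapp@sha256:" + "0" * 64))  # True
```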

No post-build modification: Once an artifact is built and signed, it is never modified. Adding files, patching binaries, or updating configurations within the artifact is forbidden.

Environment-specific configuration injected at deploy time: The artifact is environment-agnostic. Database URLs, API endpoints, feature flags, and other environment-specific values are injected at deployment through environment variables, ConfigMaps, or secrets managers — never baked into the artifact.
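A minimal sketch of an environment-agnostic service, assuming it reads its settings from environment variables at startup (a ConfigMap or secrets manager would typically populate them). The variable names `DATABASE_URL`, `API_ENDPOINT`, and `FEATURE_NEW_CHECKOUT` are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class RuntimeConfig:
    database_url: str
    api_endpoint: str
    feature_new_checkout: bool


# Settings the artifact cannot run without; none of them are baked into the image.
REQUIRED = ("DATABASE_URL", "API_ENDPOINT")


def load_config(env: Optional[Mapping[str, str]] = None) -> RuntimeConfig:
    """Read deployment-specific values at startup from the injected environment."""
    env = os.environ if env is None else env
    missing = [key for key in REQUIRED if not env.get(key)]
    if missing:
        # Fail fast so a misconfigured deployment never starts half-working.
        raise RuntimeError("missing required settings: " + ", ".join(missing))
    return RuntimeConfig(
        database_url=env["DATABASE_URL"],
        api_endpoint=env["API_ENDPOINT"],
        feature_new_checkout=env.get("FEATURE_NEW_CHECKOUT", "false").lower() == "true",
    )
```

The same image digest can then run in staging and production; only the injected values differ.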

Reproducible builds: Given the same source code, dependencies, and build environment, the build produces bit-for-bit identical output. This allows independent verification of builds. The Reproducible Builds project (reproducible-builds.org) provides tools and standards for achieving this.
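Reproducibility also applies to packaging. As a sketch, assuming Python 3.9+ and the Reproducible Builds project's convention of a fixed `SOURCE_DATE_EPOCH` timestamp, the function below builds a `.tar.gz` whose bytes depend only on file names and contents: entries are sorted, and timestamps, ownership, and permissions are normalized in both the tar headers and the gzip header. The function name is hypothetical.

```python
import gzip
import hashlib
import io
import tarfile


def deterministic_tar_gz(files: dict, source_date_epoch: int = 0) -> bytes:
    """Package {path: bytes} so identical inputs always give identical output bytes."""
    tar_buffer = io.BytesIO()
    with tarfile.open(fileobj=tar_buffer, mode="w", format=tarfile.PAX_FORMAT) as tar:
        for path in sorted(files):  # fixed entry order, independent of dict order
            data = files[path]
            info = tarfile.TarInfo(name=path)
            info.size = len(data)
            info.mtime = source_date_epoch  # no wall-clock timestamps
            info.mode = 0o644
            info.uid = info.gid = 0
            info.uname = info.gname = ""  # no build-machine user names
            tar.addfile(info, io.BytesIO(data))

    gz_buffer = io.BytesIO()
    # gzip stores a timestamp and a file name in its header: pin the timestamp
    # (a BytesIO has no name attribute, so the stored name is empty).
    with gzip.GzipFile(fileobj=gz_buffer, mode="wb", mtime=source_date_epoch) as gz:
        gz.write(tar_buffer.getvalue())
    return gz_buffer.getvalue()


build_a = deterministic_tar_gz({"bin/server": b"\x7fELF...", "etc/app.conf": b"port=8080\n"})
build_b = deterministic_tar_gz({"etc/app.conf": b"port=8080\n", "bin/server": b"\x7fELF..."})
print(hashlib.sha256(build_a).hexdigest() == hashlib.sha256(build_b).hexdigest())  # True
```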

```dockerfile
# Reproducible build: pinned base image by digest, deterministic operations
FROM golang:1.22.0@sha256:a12b34... AS builder
WORKDIR /app
COPY go.mod go.sum ./
RUN go mod download
COPY . .
RUN CGO_ENABLED=0 GOOS=linux go build -trimpath -ldflags="-s -w" -o /app/server

FROM gcr.io/distroless/static@sha256:c56d78...
COPY --from=builder /app/server /server
USER nonroot:nonroot
ENTRYPOINT ["/server"]
```

SLSA Framework (Supply-chain Levels for Software Artifacts)

SLSA (pronounced “salsa”) is a framework that defines levels of supply chain security assurance. It provides a common vocabulary and a progressive maturity model for organizations to improve their build integrity.

SLSA Levels

Level 0 — No Guarantees: No provenance, no build security requirements. This is the default state for most software. The consumer has no way to verify how the software was built, from what source, or by whom.

Level 1 — Provenance Exists:

- The build process generates provenance metadata documenting how the artifact was produced.

- A consistent, automated build process exists (not manual/ad-hoc).

- Protects against: Mistakes, human error in builds. Provides basic accountability.

- Requirement: Provenance generated by the build system, even if not signed.

Level 2 — Hosted Build, Signed Provenance:

- Builds run on a hosted build platform (not a developer laptop).

- Provenance is signed by the build platform, creating a tamper-evident record.

- Protects against: Post-build tampering. An attacker who gains access to artifact storage cannot modify artifacts without invalidating the signature.

- Requirement: Hosted build service, authenticated provenance signed by the build platform.

Level 3 — Hardened Builds:

- Build environment is hardened and isolated — builds cannot influence each other.

- Signing keys are inaccessible to the build process itself (managed by the platform).

- Provenance is unforgeable: every provenance field is generated or verified by the build platform's trusted control plane, so user-defined build steps cannot alter it.

- Protects against: Build-time threats and insider threats. Even a compromised build step cannot forge provenance for a different source.

- Requirement: Isolated, ephemeral build environments. Platform-managed signing. Unforgeable provenance.

Target Levels

- Minimum for production: SLSA Level 2. Every production artifact has signed provenance from a hosted build platform.

- High-assurance systems: SLSA Level 3. Financial systems, healthcare, defense, critical infrastructure.

- All organizations: Start at Level 1 (generate provenance) and progress to Level 2 (hosted builds, signed provenance) within one year.

Implementation

GitHub Actions, GitLab CI, and Google Cloud Build all support SLSA provenance generation:

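```yaml
# GitHub Actions: SLSA Level 3 provenance for container images
jobs:
  build:
    # ... build and push image ...
    outputs:
      digest: ${{ steps.build.outputs.digest }}  # assumes the push step has id "build"

  provenance:
    needs: build
    permissions:
      actions: read    # lets the generator read the workflow run
      id-token: write  # OIDC token for keyless signing
      packages: write  # push the attestation to GHCR
    uses: slsa-framework/slsa-github-generator/.github/workflows/generator_container_slsa3.yml@v2.0.0
    with:
      image: ghcr.io/myorg/myapp
      digest: ${{ needs.build.outputs.digest }}
      registry-username: ${{ github.actor }}
    secrets:
      registry-password: ${{ secrets.GITHUB_TOKEN }}
```

Software Bill of Materials (SBOM)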

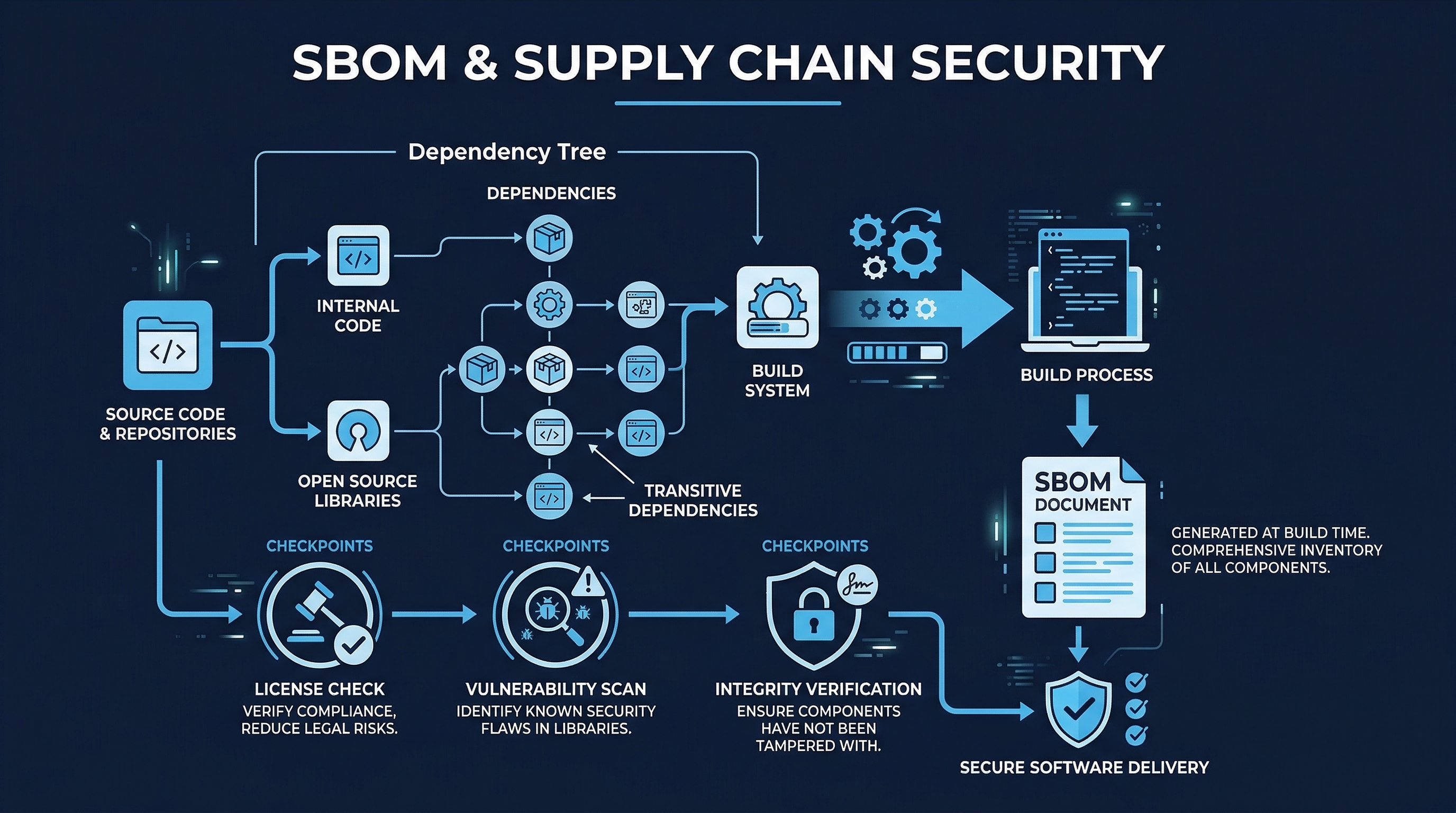

Figure: SBOM & Supply Chain Security — Software Bill of Materials components and supply chain transparency

The SBOM is the definitive inventory of everything that constitutes a software product. It is to software what an ingredients list is to food — except that software ingredients can have their own ingredients, creating a recursive dependency tree that may be dozens of levels deep.
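Because ingredients have ingredients, answering "is log4j-core anywhere in this product, and how did it get there?" means walking the dependency graph. A minimal sketch, assuming the graph has already been loaded from an SBOM's dependency relationships into a dictionary (the component names are hypothetical); breadth-first search makes the first path found a shortest one.

```python
from collections import deque
from typing import Dict, List, Optional


def dependency_path(graph: Dict[str, List[str]], root: str, target: str) -> Optional[List[str]]:
    """Return a shortest chain root -> ... -> target, or None if target is unreachable."""
    parents = {root: None}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        if node == target:
            path = []
            while node is not None:  # walk back up to the root
                path.append(node)
                node = parents[node]
            return path[::-1]
        for child in graph.get(node, []):
            if child not in parents:  # also guards against dependency cycles
                parents[child] = node
                queue.append(child)
    return None


def transitive_dependencies(graph: Dict[str, List[str]], root: str) -> set:
    """Everything reachable from root, excluding root itself."""
    seen, stack = set(), [root]
    while stack:
        for child in graph.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    seen.discard(root)
    return seen


graph = {
    "myapp": ["web-framework", "json-lib"],
    "web-framework": ["logging-facade"],
    "logging-facade": ["log4j-core"],
}
print(dependency_path(graph, "myapp", "log4j-core"))
# ['myapp', 'web-framework', 'logging-facade', 'log4j-core']
```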

NTIA Minimum Elements

The National Telecommunications and Information Administration (NTIA) defined the minimum required elements for an SBOM:

| Element | Description | Example |
|---|---|---|
| Supplier Name | Entity that creates/distributes the component | "Apache Software Foundation" |
| Component Name | Designation of the unit of software | "log4j-core" |
| Version | Identifier used by the supplier | "2.17.1" |
| Unique Identifier | Unique reference (CPE, PURL) | "pkg:maven/org.apache.logging.log4j/log4j-core@2.17.1" |
| Dependency Relationships | Which components depend on which others | "myapp depends on log4j-core" |
| Author of SBOM Data | Entity that generated this SBOM (distinct from the tool used) | "MyOrg Release Engineering" |
| Timestamp | When the SBOM was created | "2026-03-19T10:30:00Z" |

2025 CISA Additions

CISA's 2025 revision of the minimum elements, released as a draft for public comment, adds:

- Component hash: Cryptographic hash (SHA-256) of the actual component binary/source, not just the version string. This enables verification that the component in use matches the cataloged version.

- License information: SPDX license identifiers for each component. Essential for license compliance and IP risk management.

- Tool name: The tool and version used to generate the SBOM. Different tools may produce different results; knowing the tool enables assessment of SBOM quality.

- Generation context: How the SBOM was generated (source analysis, binary analysis, build-time instrumentation) — each method has different accuracy characteristics.
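As a sketch of an automated completeness check, assuming CycloneDX JSON (1.5 or later) as the standard format, the function below maps each NTIA element and 2025 CISA addition to the CycloneDX field we would expect to carry it and reports what is missing. The field mapping is our reading of the CycloneDX schema, not an official CISA or OWASP mapping, and the sample document is hypothetical.

```python
from typing import Dict, List


def missing_minimum_elements(bom: Dict) -> List[str]:
    """List NTIA/CISA minimum elements absent from a CycloneDX JSON document."""
    problems = []
    meta = bom.get("metadata", {})
    # Document-level elements.
    for field, element in (
        ("timestamp", "Timestamp"),
        ("authors", "Author of SBOM Data"),
        ("tools", "Tool name"),
        ("lifecycles", "Generation context"),
    ):
        if not meta.get(field):
            problems.append(f"metadata.{field}: missing {element}")

    # Component-level elements; a component needs an entry in the dependency graph.
    refs_in_graph = {entry.get("ref") for entry in bom.get("dependencies", [])}
    for comp in bom.get("components", []):
        label = f"{comp.get('name', '<unnamed>')}@{comp.get('version', '<no version>')}"
        checks = (
            (comp.get("supplier", {}).get("name"), "Supplier Name"),
            (comp.get("name"), "Component Name"),
            (comp.get("version"), "Version"),
            (comp.get("purl") or comp.get("cpe"), "Unique Identifier"),
            (comp.get("bom-ref") in refs_in_graph, "Dependency Relationships"),
            (any(h.get("alg") == "SHA-256" for h in comp.get("hashes", [])), "Component hash"),
            (comp.get("licenses"), "License information"),
        )
        problems.extend(f"{label}: missing {element}" for ok, element in checks if not ok)
    return problems


sample_bom = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "metadata": {
        "timestamp": "2026-03-19T10:30:00Z",
        "authors": [{"name": "MyOrg Release Engineering"}],
        "tools": {"components": [{"type": "application", "name": "syft", "version": "0.98.0"}]},
        "lifecycles": [{"phase": "build"}],
    },
    "components": [{
        "bom-ref": "log4j-core",
        "type": "library",
        "supplier": {"name": "Apache Software Foundation"},
        "name": "log4j-core",
        "version": "2.17.1",
        "purl": "pkg:maven/org.apache.logging.log4j/log4j-core@2.17.1",
        "licenses": [{"license": {"id": "Apache-2.0"}}],
    }],
    "dependencies": [{"ref": "log4j-core", "dependsOn": []}],
}
print(missing_minimum_elements(sample_bom))  # ['log4j-core@2.17.1: missing Component hash']
```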

SBOM Formats

SPDX (Software Package Data Exchange):

- ISO/IEC 5962:2021 — an international standard.

- Originated from the Linux Foundation.

- Comprehensive license tracking capabilities.

- Formats: JSON, RDF, YAML, tag-value, XML.

- Stronger in license compliance use cases.

CycloneDX:

- OWASP project — designed for security use cases.

- Native support for vulnerability data, services, and ML/AI components.

- Formats: JSON, XML, Protocol Buffers.

- Stronger in vulnerability management and DevSecOps integration.

- Includes VEX (Vulnerability Exploitability eXchange) support for communicating vulnerability status.

Both formats are widely supported by tools and registries. Organizations should choose one as their standard and ensure consistent generation across all projects.

SBOM Generation

SBOMs must be generated automatically in CI for every release:

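```yaml
# Generate SBOMs in multiple formats during CI build
- name: Generate SBOM (CycloneDX)
  run: syft . -o cyclonedx-json=sbom-cyclonedx.json

- name: Generate SBOM (SPDX)
  run: syft . -o spdx-json=sbom-spdx.json

- name: Attach SBOM to Release
  env:
    GH_TOKEN: ${{ github.token }}  # gh CLI authenticates with this token
  run: |
    gh release upload "$TAG" sbom-cyclonedx.json sbom-spdx.json

- name: Store signed SBOM attestation in registry
  # `cosign attach sbom` uploads the SBOM unsigned; `cosign attest` signs it
  run: |
    cosign attest --yes --type cyclonedx --predicate sbom-cyclonedx.json "$IMAGE@$DIGEST"
```

Tools: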

- Syft: Generates SBOMs from container images, file systems, and archives. Supports SPDX and CycloneDX output.

- Trivy: In addition to vulnerability scanning, generates SBOMs from images and repositories.

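- CycloneDX CLI and generators: The CycloneDX CLI converts, merges, diffs, and validates BOMs; per-ecosystem CycloneDX generators (for example, for Maven, npm, and Python) produce them.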

- SPDX tools: Official SPDX generation and validation tools.

- Microsoft SBOM Tool (sbom-tool): Generates SPDX SBOMs with build-time integration.

SBOM Lifecycle

Generation is only the first step:

- Generate: Automatically during every CI build and release.

- Store: With the artifact in the artifact registry, signed and versioned.

- Distribute: Provide to consumers (customers, partners, regulators) alongside the software.

- Monitor: Continuously scan SBOMs against updated vulnerability databases. A component that was clean at build time may have new CVEs discovered later.

- Update: When components are updated, regenerate and distribute updated SBOMs.

- Archive: Retain historical SBOMs for compliance and forensics. If a vulnerability is discovered in a component, historical SBOMs reveal which releases are affected.
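The Archive step pays off when a new advisory lands. A minimal sketch, assuming archived CycloneDX SBOMs keyed by release tag and components identified by package URL (purl); the release tags and the affected-version set are hypothetical inputs.

```python
from typing import Dict, Iterable, List, Tuple


def purl_name_and_version(purl: str) -> Tuple[str, str]:
    """Split 'pkg:type/namespace/name@version?qualifiers#subpath' into (base, version)."""
    core = purl.split("#", 1)[0].split("?", 1)[0]  # drop subpath and qualifiers first
    base, sep, version = core.rpartition("@")
    return (base, version) if sep else (core, "")


def affected_releases(archive: Dict[str, Dict], package: str, bad_versions: Iterable[str]) -> List[str]:
    """Releases whose archived SBOM contains `package` at an affected version."""
    bad = set(bad_versions)
    hits = []
    for release, bom in archive.items():  # keeps archive order, e.g. oldest first
        for comp in bom.get("components", []):
            base, version = purl_name_and_version(comp.get("purl", ""))
            if base == package and version in bad:
                hits.append(release)
                break
    return hits


LOG4J = "pkg:maven/org.apache.logging.log4j/log4j-core"
archive = {
    "v1.3.0": {"components": [{"purl": LOG4J + "@2.14.1"}]},
    "v1.4.0": {"components": [{"purl": LOG4J + "@2.15.0?type=jar"}]},
    "v1.5.0": {"components": [{"purl": LOG4J + "@2.17.1"}]},
}
print(affected_releases(archive, LOG4J, {"2.14.1", "2.15.0"}))  # ['v1.3.0', 'v1.4.0']
```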

AI BOM: Extending SBOM for AI Systems

Traditional SBOMs were designed for conventional software — libraries, frameworks, and executables. They are not designed to capture the unique components of AI systems. As AI becomes embedded in more software products, a parallel inventory mechanism is required.

Why Traditional SBOMs Are Insufficient for AI

AI systems have components that do not map to traditional software concepts:

- Models are not libraries: An AI model is a trained artifact with specific weights, biases, and architectures. It has a training lineage (what data, what process, what hyperparameters) that affects its behavior and safety characteristics.

- Training data is not source code: The data used to train a model significantly influences its behavior, biases, and vulnerabilities. A model trained on poisoned data may produce correct results 99.9% of the time but contain a backdoor triggered by specific inputs.

- AI services are not ordinary APIs: Third-party AI services (OpenAI, Anthropic, Google) are components of the system, but they are opaque: you do not control their models, training data, or behavior changes.

- Retraining creates new versions: When a model is retrained, it is functionally a new component, even if the architecture is unchanged. The training process, data, and resulting weights all matter.

AI BOM Components

An AI BOM tracks:

| Component Type | What to Track | Why It Matters |
|---|---|---|
| AI Models/Weights | Model name, version, architecture, framework, size, quantization, fine-tuning lineage | A model update can change behavior, introduce bias, or create new vulnerabilities |
| Training Datasets | Source, size, preprocessing, filtering, labeling methodology, refresh schedule | Poisoned training data creates backdoors; biased data creates discriminatory outputs |
| Embeddings | Embedding model, dimension, training corpus, creation date | Embeddings encode assumptions about language and meaning that affect downstream behavior |
| Orchestration Layers | Framework (LangChain, LlamaIndex, Semantic Kernel), version, prompt templates | Orchestration vulnerabilities (prompt injection, tool abuse) are a distinct attack surface |
| Third-Party AI Services | Provider, model, API version, pricing tier, data handling policy | Provider changes can break functionality; data retention policies affect compliance |
| Retraining Processes | Schedule, trigger conditions, data pipeline, validation criteria | Retraining without validation can introduce regressions or incorporate poisoned data |
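As an illustration of how some rows of this table could be recorded in CycloneDX (which natively supports ML/AI components), here is a Python sketch that emits a model component, a dataset component, and a third-party AI service. It assumes CycloneDX 1.5 or later, which defines the `machine-learning-model` and `data` component types and a `modelCard` object; the model, dataset, provider names, and hash are hypothetical, and the output should be validated against the official schema before use.

```python
import json


def ai_bom_fragment() -> dict:
    """Hypothetical AI BOM entries expressed as a CycloneDX 1.5 document."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "components": [
            {
                "bom-ref": "model-support-classifier",
                "type": "machine-learning-model",
                "name": "support-ticket-classifier",
                "version": "3.2.0",  # bumped on every retraining
                "hashes": [{"alg": "SHA-256", "content": "0" * 64}],  # placeholder weights digest
                "modelCard": {
                    "modelParameters": {
                        "task": "text-classification",
                        "architectureFamily": "transformer",
                        "datasets": [{"ref": "data-tickets-2025q4"}],  # training lineage
                    }
                },
            },
            {
                "bom-ref": "data-tickets-2025q4",
                "type": "data",
                "name": "support-tickets-2025q4",
                "description": "De-identified tickets; filtering and labeling documented separately",
            },
        ],
        "services": [
            {
                "bom-ref": "svc-hosted-llm",
                "name": "hosted-llm-api",
                "provider": {"name": "ExampleAI Inc."},
                "version": "2025-06-01",  # provider API version
                "endpoints": ["https://api.example.com/v1/chat"],
            }
        ],
        "dependencies": [
            {"ref": "model-support-classifier", "dependsOn": ["data-tickets-2025q4"]},
        ],
    }


print(json.dumps(ai_bom_fragment(), indent=2))
```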

CISA AI SBOM Use Case Guide

CISA published the AI SBOM Use Case Guide to address this gap. Key guidance:

- AI SBOMs should extend, not replace, traditional SBOMs: The AI BOM is an additional layer that complements the standard software SBOM.

- Provenance for AI components: Document the full lineage of AI models — training data sources, preprocessing steps, training infrastructure, evaluation results.

- Transparency for AI services: When using third-party AI, document the provider, service level, data handling, and the organization’s assessment of the provider’s security practices.

Regulatory Landscape

SBOM requirements are becoming mandatory across jurisdictions:

- EU AI Act GPAI obligations: Since 2 August 2025, providers of General-Purpose AI (GPAI) models must maintain technical documentation covering model architecture, training data, and known limitations, and must publish a sufficiently detailed summary of the content used for training. This is functionally an AI BOM requirement.

- US Executive Order 14028: Requires SBOMs for software sold to the federal government.

- FDA: Requires SBOMs for medical device software.

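- Multiple jurisdictions: SBOM expectations are expanding. The EU Cyber Resilience Act requires manufacturers of products with digital elements to draw up an SBOM, while supply chain and ICT third-party risk rules in financial services (DORA in the EU) and critical infrastructure (NIS2 in the EU) push organizations toward the same component inventories. Government procurement requirements are expanding globally.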

The Visibility Gap

Despite growing requirements, organizational readiness lags:

- 25% of organizations are unsure which AI services or datasets are active in their environment. They literally do not know what AI components are in production.

- Only 24% apply comprehensive evaluations to AI-generated code. The output of AI coding assistants is entering production without the same scrutiny as human-written code or third-party libraries.

- AI model cards (documentation of model capabilities, limitations, and intended use) are inconsistently produced and rarely machine-readable.

Provenance Attestation

Provenance goes beyond signing — it documents the entire build process, creating an auditable record of how an artifact came to exist.

in-toto Framework

in-toto is a framework for securing the integrity of software supply chains by verifying that each step in the process was carried out as planned, by the right actor, producing the expected output:

- Layout: Defines the expected steps in the supply chain (clone, build, test, package, sign).

- Links: Each step produces a link file signed by the actor, documenting inputs and outputs.

- Verification: Consumers verify that all expected steps were completed, by authorized actors, in the correct order, with the expected inputs and outputs.
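The core of that verification can be sketched in a few lines of Python. This is a simplified model, not the in-toto library: signatures on the layout and links are omitted, and the `Link`/`Step` types, step names, functionary names, and short digests are hypothetical. It checks that every expected step has a link from an authorized functionary and that each step's materials match, hash for hash, the products of the step before it, which is how an artifact swapped between build and packaging gets caught.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Link:
    """What one step recorded: who ran it, what it consumed, what it produced."""
    step: str
    functionary: str
    materials: Dict[str, str] = field(default_factory=dict)  # path -> sha256
    products: Dict[str, str] = field(default_factory=dict)   # path -> sha256


@dataclass
class Step:
    """Layout entry: the expected step, who may run it, and whose products it consumes."""
    name: str
    authorized: Set[str]
    consumes_from: Optional[str] = None


def verify(layout: List[Step], links: Dict[str, Link]) -> List[str]:
    errors = []
    for step in layout:
        link = links.get(step.name)
        if link is None:
            errors.append(f"{step.name}: no link metadata (step skipped?)")
            continue
        if link.functionary not in step.authorized:
            errors.append(f"{step.name}: run by unauthorized {link.functionary}")
        if step.consumes_from:
            upstream = links.get(step.consumes_from)
            produced = upstream.products if upstream else {}
            for path, digest in link.materials.items():
                if produced.get(path) != digest:  # tampered or unexpected input
                    errors.append(f"{step.name}: {path} does not match {step.consumes_from} output")
    return errors


layout = [
    Step("build", {"ci-builder"}),
    Step("package", {"ci-packager"}, consumes_from="build"),
]
links = {
    "build": Link("build", "ci-builder", products={"app.bin": "aaa111"}),
    "package": Link("package", "ci-packager", materials={"app.bin": "bbb222"},
                    products={"app.tar.gz": "ccc333"}),
}
print(verify(layout, links))  # ['package: app.bin does not match build output']
```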

SLSA Provenance Predicates

SLSA defines a standard provenance format (based on in-toto):

```json
{
  "_type": "https://in-toto.io/Statement/v1",
  "subject": [{
    "name": "ghcr.io/myorg/myapp",
    "digest": { "sha256": "abc123..." }
  }],
  "predicateType": "https://slsa.dev/provenance/v1",
  "predicate": {
    "buildDefinition": {
      "buildType": "https://slsa-framework.github.io/github-actions-buildtypes/workflow/v1",
      "externalParameters": {
        "workflow": {
          "ref": "refs/heads/main",
          "repository": "https://github.com/myorg/myapp",
          "path": ".github/workflows/release.yml"
        }
      },
      "resolvedDependencies": [
        {
          "uri": "git+https://github.com/myorg/myapp@refs/heads/main",
          "digest": { "gitCommit": "def456..." }
        }
      ]
    },
    "runDetails": {
      "builder": { "id": "https://github.com/slsa-framework/slsa-github-generator/.github/workflows/generator_container_slsa3.yml@refs/tags/v2.0.0" },
      "metadata": {
        "invocationId": "https://github.com/myorg/myapp/actions/runs/1234567890/attempts/1",
        "startedOn": "2026-03-19T10:00:00Z",
        "finishedOn": "2026-03-19T10:05:00Z"
      }
    }
  }
}
```

This provenance attestation tells a consumer: “This artifact was built from commit def456 of repository myorg/myapp on branch main, using the workflow at .github/workflows/release.yml, by the SLSA GitHub generator v2.0.0, on March 19, 2026.”
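Consumers act on such a statement by comparing it with their expectations before admitting the artifact. A minimal Python sketch of those policy checks, assuming the statement has already been extracted from its signed envelope and its signature verified (tools such as slsa-verifier handle that part); the function name, the full-length sample digests, and the trusted-builder prefix are hypothetical.

```python
from typing import Dict, List

SLSA_V1 = "https://slsa.dev/provenance/v1"
STATEMENT_V1 = "https://in-toto.io/Statement/v1"


def provenance_policy_errors(
    statement: Dict,
    artifact_sha256: str,
    expected_repo: str,
    trusted_builder_prefix: str,
    allowed_refs: tuple = ("refs/heads/main",),
) -> List[str]:
    """Return reasons to reject; an empty list means the provenance matches policy."""
    errors = []
    if statement.get("_type") != STATEMENT_V1:
        errors.append("not an in-toto v1 statement")
    if statement.get("predicateType") != SLSA_V1:
        errors.append("predicate is not SLSA provenance v1")
    digests = {s.get("digest", {}).get("sha256") for s in statement.get("subject", [])}
    if artifact_sha256 not in digests:
        errors.append("provenance does not describe this artifact")
    predicate = statement.get("predicate", {})
    workflow = predicate.get("buildDefinition", {}).get("externalParameters", {}).get("workflow", {})
    if workflow.get("repository") != expected_repo:
        errors.append(f"built from unexpected repository {workflow.get('repository')!r}")
    if workflow.get("ref") not in allowed_refs:
        errors.append(f"built from unexpected ref {workflow.get('ref')!r}")
    builder_id = predicate.get("runDetails", {}).get("builder", {}).get("id", "")
    if not builder_id.startswith(trusted_builder_prefix):
        errors.append(f"untrusted builder {builder_id!r}")
    return errors


GENERATOR = "https://github.com/slsa-framework/slsa-github-generator/.github/workflows/generator_container_slsa3.yml"
TRUSTED = GENERATOR + "@refs/tags/v2."
statement = {
    "_type": STATEMENT_V1,
    "subject": [{"name": "ghcr.io/myorg/myapp", "digest": {"sha256": "1" * 64}}],
    "predicateType": SLSA_V1,
    "predicate": {
        "buildDefinition": {"externalParameters": {"workflow": {
            "ref": "refs/heads/main",
            "repository": "https://github.com/myorg/myapp",
            "path": ".github/workflows/release.yml",
        }}},
        "runDetails": {"builder": {"id": GENERATOR + "@refs/tags/v2.0.0"}},
    },
}
print(provenance_policy_errors(statement, "1" * 64, "https://github.com/myorg/myapp", TRUSTED))  # []
print(provenance_policy_errors(statement, "2" * 64, "https://github.com/myorg/myapp", TRUSTED))
# ['provenance does not describe this artifact']
```

A prefix match on the builder ID is a simplification; pinning the exact trusted builder versions is stricter.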

NIST SSDF PS.3 Alignment

NIST SP 800-218 (Secure Software Development Framework) Practice PS.3 requires organizations to:

- Archive and protect each software release: Maintain the ability to verify the integrity of any released version.

- Maintain provenance data: Document the origin and build process for all software components.

- Provide SBOMs to acquirers: Make SBOM data available to consumers of the software.

The controls in this module directly implement PS.3:

- Artifact signing and verification ensures integrity.

- SLSA provenance documents the build process.

- SBOM generation provides component inventory.

- AI BOM extends this to AI components.

Implementation Checklist

| Control | Priority | Status |
|---|---|---|
| SBOM generation automated in CI for every release | Critical | |
| SBOM includes NTIA minimum elements + CISA additions | Critical | |
| SBOMs stored with artifacts and distributed to consumers | High | |
| Continuous monitoring of SBOMs against vulnerability DBs | High | |
| All container images signed (Cosign/Sigstore) | Critical | |
| Admission controllers enforce signature verification | Critical | |
| SLSA Level 2 provenance for production artifacts | High | |
| Immutable builds: build once, promote everywhere | High | |
| Reproducible build capability for critical systems | Medium | |
| AI BOM tracking for AI components | High | |
| AI model provenance documented (data, training, evaluation) | High | |
| Third-party AI service inventory maintained | High | |
| SLSA Level 3 for high-assurance systems | Medium | |
| Historical SBOM archive maintained | Medium | |

Key Takeaways

- You cannot secure what you cannot see: SBOMs are not bureaucratic overhead — they are the foundation of supply chain security. Without a complete inventory of components, vulnerability management is guesswork.

- Sign everything, verify everywhere: Artifact signing without verification at deployment is security theater. Both halves are required.

- SLSA provides a maturity path: Start at Level 1 (provenance exists), reach Level 2 (hosted builds, signed provenance) as the minimum for production, and target Level 3 for high-assurance systems.

- AI components need AI BOMs: Traditional SBOMs do not capture AI models, training data, or AI service dependencies. Extend your inventory practices to cover the AI supply chain.

- Regulatory momentum is accelerating: SBOM mandates are expanding across jurisdictions and industries. Building SBOM capabilities now avoids scrambling when compliance deadlines arrive.

- Immutable, reproducible builds are the goal: Build once, sign, promote. No post-build modification. Environment-specific config at deploy time. Reproducible builds enable independent verification.

References

- SLSA Framework: https://slsa.dev/

- Sigstore / Cosign: https://sigstore.dev/

- NTIA SBOM Minimum Elements: https://www.ntia.gov/sbom

- CISA SBOM Resources: https://www.cisa.gov/sbom

- CycloneDX: https://cyclonedx.org/

- SPDX: https://spdx.dev/

- in-toto Framework: https://in-toto.io/

- NIST SP 800-218 (SSDF): https://csrc.nist.gov/publications/detail/sp/800-218/final

- EU AI Act: https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Study Guide

Key Takeaways

- You cannot secure what you cannot see — SBOMs are the foundation of supply chain security, not bureaucratic overhead.

- Sign everything, verify everywhere — Artifact signing without verification at deployment is security theater; both halves are required.

- SLSA provides a maturity path — Level 1 (provenance exists), Level 2 (hosted builds + signed provenance), Level 3 (hardened builds with platform-managed keys).

- Build once, promote everywhere — Same bytes through all environments; environment config injected at deploy time, never baked in.

- AI components need AI BOMs — Traditional SBOMs do not capture models, training data, or AI service dependencies; 25% of orgs lack visibility into active AI services.

- Regulatory momentum is accelerating — EU AI Act, EU Cyber Resilience Act, US EO 14028, FDA all mandate SBOMs in various scopes.

- in-toto verifies the full supply chain — Layouts define expected steps; Links document each step’s inputs/outputs signed by actors.

Important Definitions

| Term | Definition |
|---|---|
| SLSA | Supply-chain Levels for Software Artifacts (pronounced “salsa”): framework for build integrity levels |
| SBOM | Software Bill of Materials: definitive inventory of all components in a software product |
| CycloneDX | OWASP SBOM format designed for security use cases with VEX support |
| SPDX | ISO standard SBOM format from Linux Foundation, strong in license compliance |
| Cosign | Sigstore tool for signing and verifying container images and artifacts |
| Fulcio | Sigstore certificate authority issuing short-lived signing certificates via OIDC |
| Rekor | Sigstore immutable transparency log recording all signing events |
| in-toto | Framework verifying supply chain integrity through Layouts and Links |
| NTIA Minimum Elements | Supplier name, component name, version, unique ID, dependencies, author, timestamp |
| Immutable Build | Artifact built once, identified by SHA-256 hash, never modified post-build |

Quick Reference

- SLSA Targets: Minimum production = Level 2; High-assurance = Level 3; Start at Level 1 and progress within one year

- NTIA + CISA Elements: Original 7 (supplier, component, version, ID, deps, author, timestamp) + 2025 additions (hash, license, tool, context)

- Sigstore Ecosystem: Cosign (sign/verify) + Fulcio (CA) + Rekor (transparency log) + Gitsign (git commits) + Policy Controller (K8s admission)

- SBOM Lifecycle: Generate -> Store -> Distribute -> Monitor -> Update -> Archive

- Common Pitfalls: Generating SBOMs without continuous monitoring, signing without verification at deployment, missing AI components in inventory, using mutable tags instead of digests

Review Questions

- Explain the difference between SLSA Levels 1, 2, and 3 and what threat each level protects against.

- Why is “build once, promote everywhere” a security principle, not just a convenience?

- How does the in-toto framework detect if an attacker modifies an artifact between the build and packaging steps?

- Design an SBOM strategy that covers both traditional software components and AI models used in your application.

- What is the difference between SPDX and CycloneDX, and when would you choose one over the other?